Identity and Access Governance (IGA): Definition & Differentiation Explained

Identity is now the most common entry point for attackers. In cloud-native environments, thousands of microservices, containers, and agents request credentials every day, and each one represents a potential weakness. The imbalance between human and non-human identities (NHIs) is growing, but many organizations still devote the bulk of their identity and access governance (IGA) efforts to the former.

Over the past two years, 57% of organizations experienced at least one API-related breach; of those, 73% saw three or more incidents. At the same time, the global IGA market was valued at approximately $8 billion in 2024, driven by compliance frameworks such as SOC 2, GDPR, HIPAA, and CCPA that demand auditable proof of access controls.

The takeaway: static defenses built on logins and standing permissions can’t keep pace with identities that appear and disappear daily. For engineering teams, identity and access governance has shifted from a “nice-to-have” to a baseline requirement for both security and trust.

What is identity and access governance (IGA)?

Identity and access governance (IGA) is the framework your organization can use to decide who should have access to systems, applications, and data, and whether that access is still appropriate. IGA goes beyond the mechanics of logging in and instead focuses on oversight, accountability, and policy enforcement.

Most IGA programs are built around a few core practices:

- Identity lifecycle management: Provisioning, modifying, and deprovisioning accounts.

- Role and entitlement management: Grouping permissions and enforcing least privilege.

- Access reviews and certifications: Recurring checks to validate appropriateness of access.

- Compliance reporting: Generating evidence required by auditors and regulators.

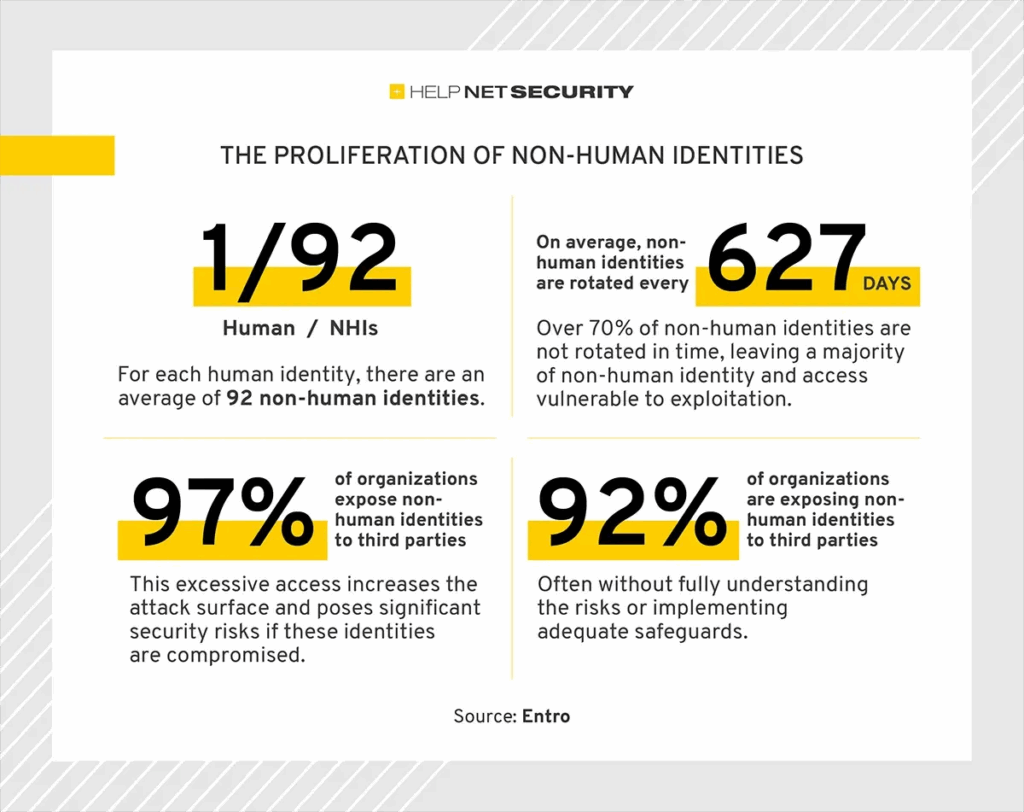

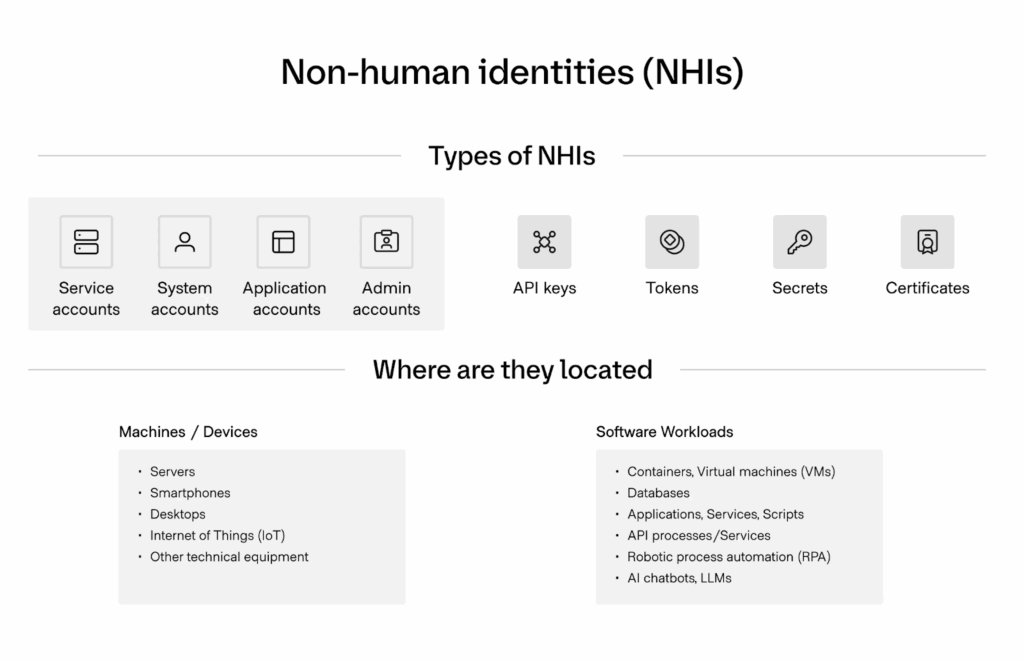

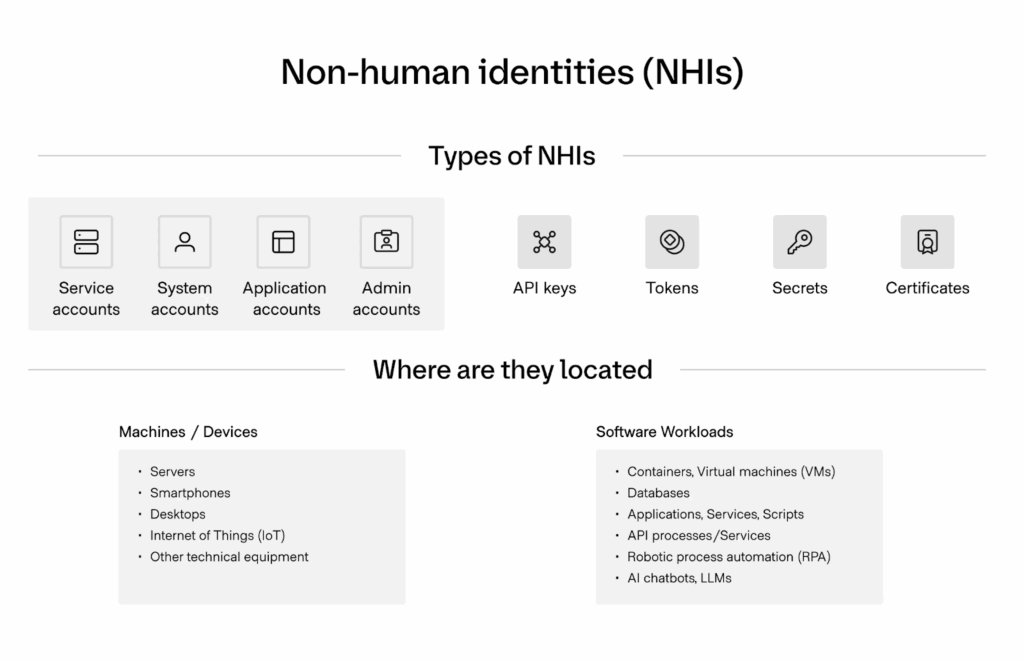

Unlike identity and access management (IAM), which enforces access at runtime, IGA asks the harder question: should this access exist at all? Answering this question is harder today because identities are multiplying. Machine identities outnumber humans by over 80 to 1, making them one of the fastest-growing risk classes in cloud-native environments. Unlike human accounts, NHIs rarely go through onboarding or offboarding, rely on static API keys or long-lived tokens, and are frequently overprivileged—the perfect storm for attackers.

Core capabilities of Identity and Access Governance

IGA is about ensuring access is appropriate, accountable, and, most importantly, auditable. To support these three pillars, IGA platforms bring together several capabilities.

- Access reviews and certification: Periodic checks give managers and system owners the chance to confirm that permissions are still valid. They’re meant to clean up access left behind after job changes, project work, or employee turnover.

- Role and entitlement management: Permissions are grouped into roles to make administration manageable. This model keeps access consistent across teams and reduces the scatter of exceptions that creep in over time.

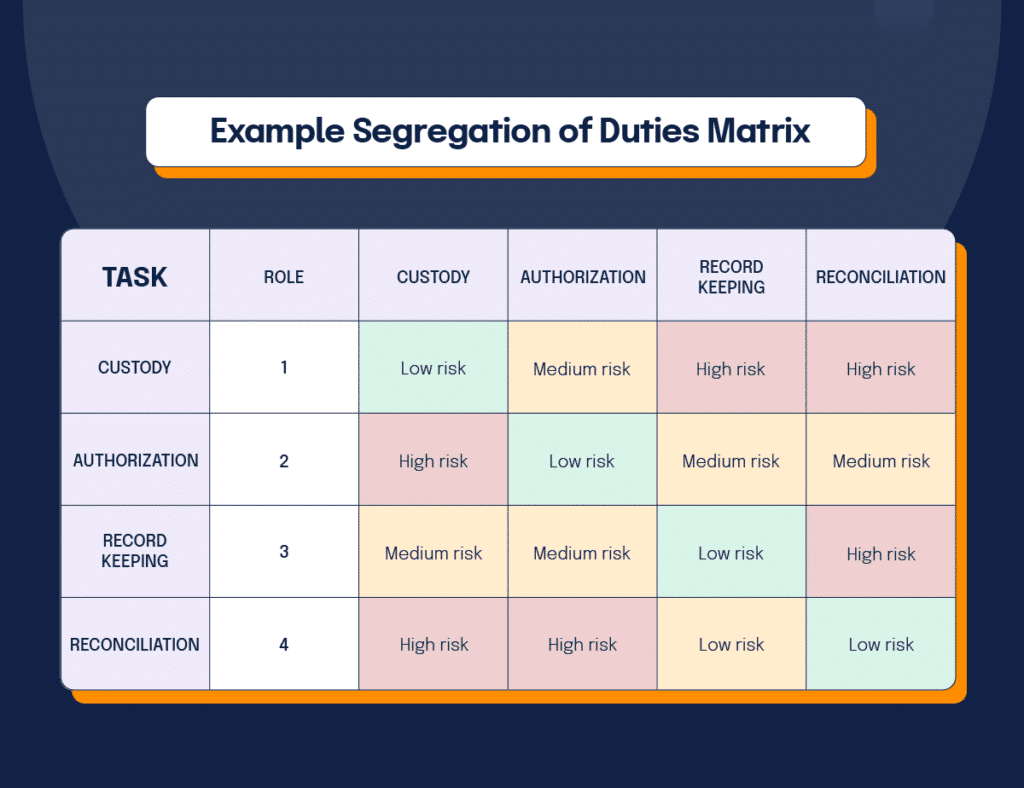

- Separation of Duties (SoD): SoD prevents conflicting privileges so that no single identity has the ability to commit fraud or bypass checks.

- Audit and compliance reporting: Most frameworks, from SOC 2 to GDPR, require proof that access is being governed. Automated reports provide that evidence and complement broader vulnerability management programs designed to reduce risk.

- Delegated administration and approval workflows: Requests can be routed to business or technical owners who best understand whether access makes sense. This step spreads responsibility more evenly, while decisions remain logged centrally.

Crucially, modern IGA extends these capabilities beyond human users to include NHIs, ensuring service accounts and automation agents undergo the same scrutiny as employees.
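To make the Separation of Duties capability above concrete, here is a minimal sketch of a conflict check that treats human and non-human identities the same way. The entitlement names and the conflict rules are hypothetical, chosen only for illustration; a real IGA platform would load both from its own policy store.

```python
# Hypothetical SoD rules: each rule is a pair of entitlements
# that no single identity (human or machine) should hold together.
SOD_CONFLICTS = {
    frozenset({"create_vendor", "approve_payment"}),
    frozenset({"deploy_to_prod", "approve_deploy"}),
}


def find_sod_violations(assignments):
    """Return {identity: [conflicting pairs]} for identities that hold both sides of a rule."""
    violations = {}
    for identity, entitlements in assignments.items():
        held = set(entitlements)
        # A rule is violated when every entitlement in it is held by the same identity.
        hits = sorted(tuple(sorted(rule)) for rule in SOD_CONFLICTS if rule <= held)
        if hits:
            violations[identity] = hits
    return violations


# Example data (hypothetical): one employee and two service accounts.
assignments = {
    "alice": ["create_vendor", "approve_payment"],
    "ci-deployer": ["deploy_to_prod"],
    "release-bot": ["approve_deploy", "read_logs"],
}

print(find_sod_violations(assignments))
# {'alice': [('approve_payment', 'create_vendor')]}
```

Running the same check over service accounts and employees is the point: a deploy bot that can also approve its own deployments is as much an SoD problem as a person who can.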

IGA, IAM, and PAM Compared

Identity management has grown into a set of overlapping disciplines, each with its own focus. Many people still use the terms interchangeably, but this approach can blur the lines between strategic governance and privileged account protection.

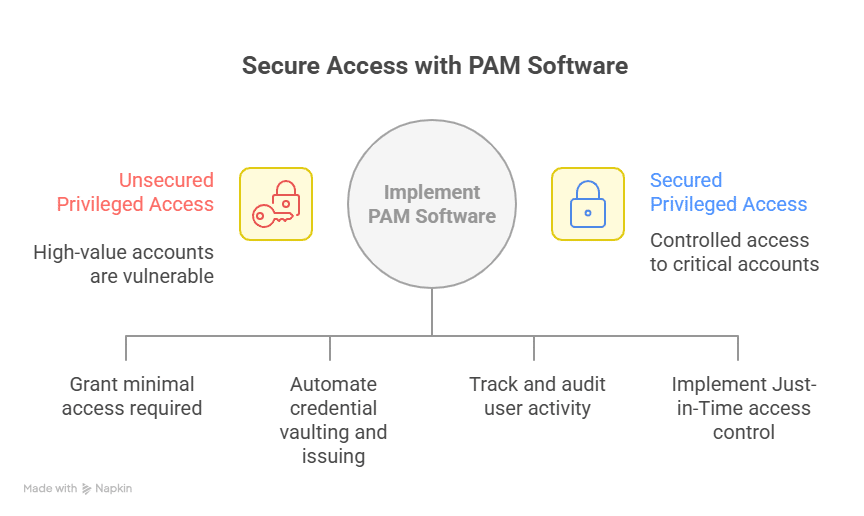

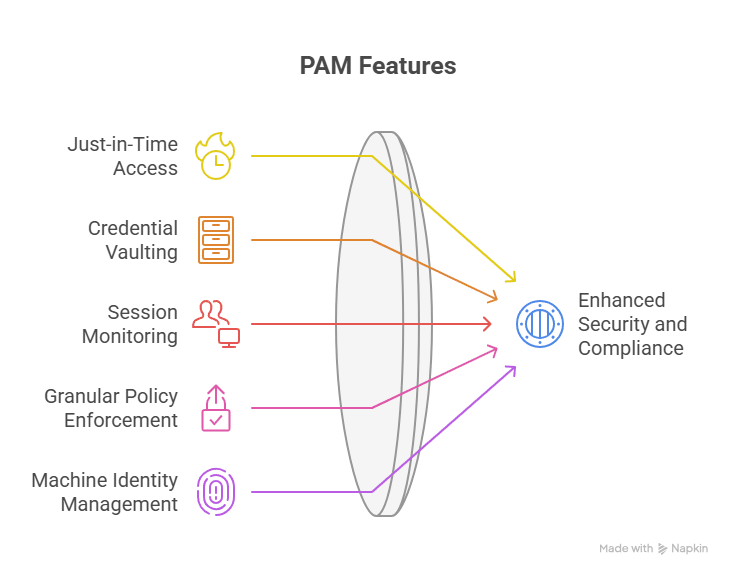

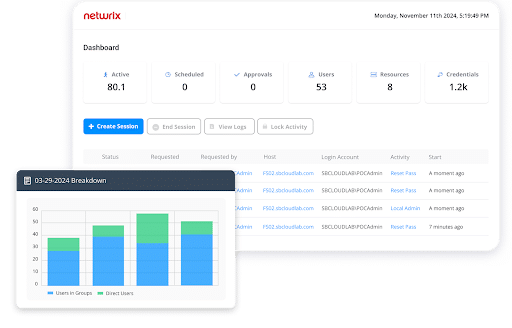

It’s helpful to understand exactly where each begins and ends. IAM is concerned with authentication and access control at the point of login. IGA adds oversight, certification, and auditability across all identities. Privileged access management (PAM) narrows in on the riskiest accounts, such as administrators and root users, to monitor and control their activity. For example, organizations rely on PAM software to enforce controls around these sensitive accounts, ensuring that high-risk permissions are granted only when necessary and are closely monitored.

Table 1: IGA vs IAM vs PAM

| Discipline | Focus | Typical Scope | Key Purpose |
| --- | --- | --- | --- |
| IAM | Enforcement | Authentication, MFA, SSO | Prove identity and control access at login |
| IGA | Governance | Human and non-human identities | Define, review, and certify who should have access and why |
| PAM | Privilege | High-risk administrator and root accounts | Control and monitor privileged sessions |

5 Challenges of Implementing IGA in Cloud-Native Environments

1. Scaling Ephemeral Identities

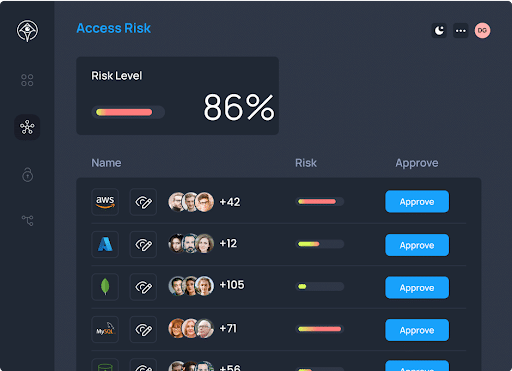

In a cloud-native stack, thousands of containers, pods, and serverless functions may launch and terminate within minutes. Each instance often requires its own token or temporary credential to function. Legacy governance processes that rely on quarterly or monthly reviews cannot track this churn, so permissions are left unchecked. Security teams end up with audit trails that miss most of the short-lived identities, which makes proving compliance or investigating incidents almost impossible. A best practice to overcome this challenge is to use a cloud-native access management solution like Apono, which automates JIT access and generates granular audit logs, so even short-lived identities are governed in real time.

2. Complex Permissions

Cloud providers like AWS, Azure, and GCP offer permission systems with thousands of individual actions that can be combined into highly customized roles. Developers frequently over-provision roles because mapping business tasks to such granular entitlements is too time-consuming. Over time, this permission sprawl compounds, creating toxic combinations of privileges that static governance models don’t properly evaluate.
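One way to reason about over-provisioning is to compare what a role has been granted with what it has actually been observed using. The sketch below assumes you already have both lists as exact action strings; the function name `audit_role` and the sample data are hypothetical, and wildcard expansion and condition keys are deliberately left out.

```python
def audit_role(granted, used):
    """Split a role's granted actions into those seen in use and those never observed."""
    granted_set, used_set = set(granted), set(used)
    return {
        "keep": sorted(granted_set & used_set),
        "review_for_removal": sorted(granted_set - used_set),
    }


# Hypothetical role: granted four actions, but usage logs only show two of them.
granted = ["s3:GetObject", "s3:PutObject", "s3:DeleteBucket", "iam:PassRole"]
used = ["s3:GetObject", "s3:PutObject"]

report = audit_role(granted, used)
print(report["keep"])                # ['s3:GetObject', 's3:PutObject']
print(report["review_for_removal"])  # ['iam:PassRole', 's3:DeleteBucket']
```

The unused set is a list of candidates for review, not for automatic removal, since some permissions are needed only rarely (for example, during incident recovery).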

3. Friction with Development Teams

When engineers need access to a production database or a new cloud service, the request usually goes into a ticket queue. When reviews take too long, teams are forced to delay work or find workarounds such as borrowing credentials.

This bottleneck not only slows delivery but also weakens governance, because security comes to be seen as a blocker rather than a partner. In some organizations, administrators pre-approve broad entitlements “just in case.” This mistake undermines the entire principle of least privilege and increases the chance of compromised credentials being abused across environments.

4. Non-Human Identities

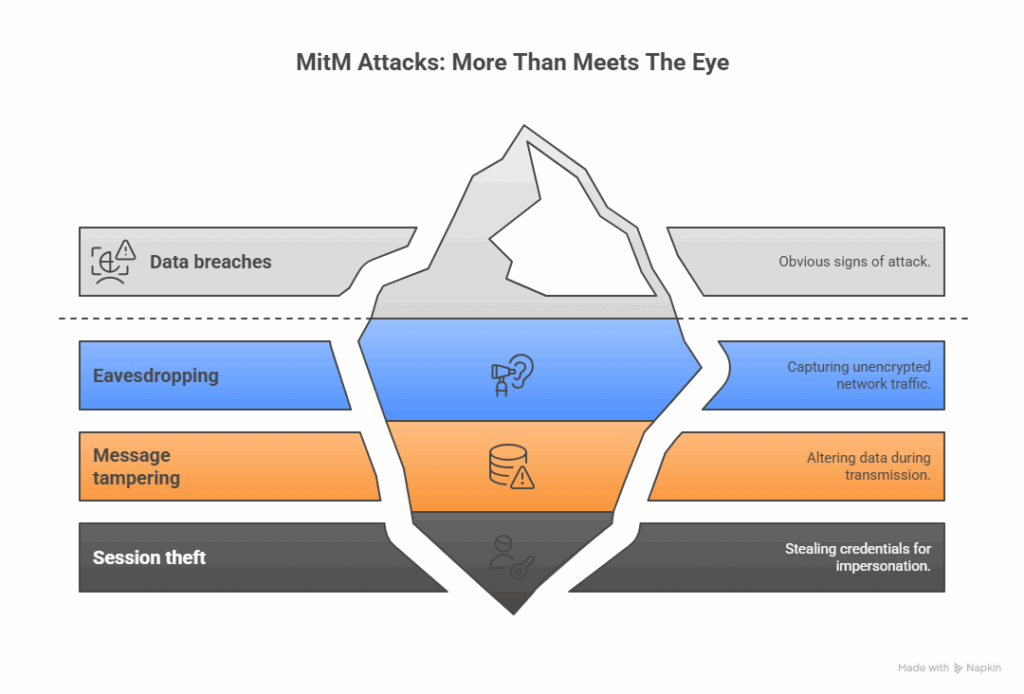

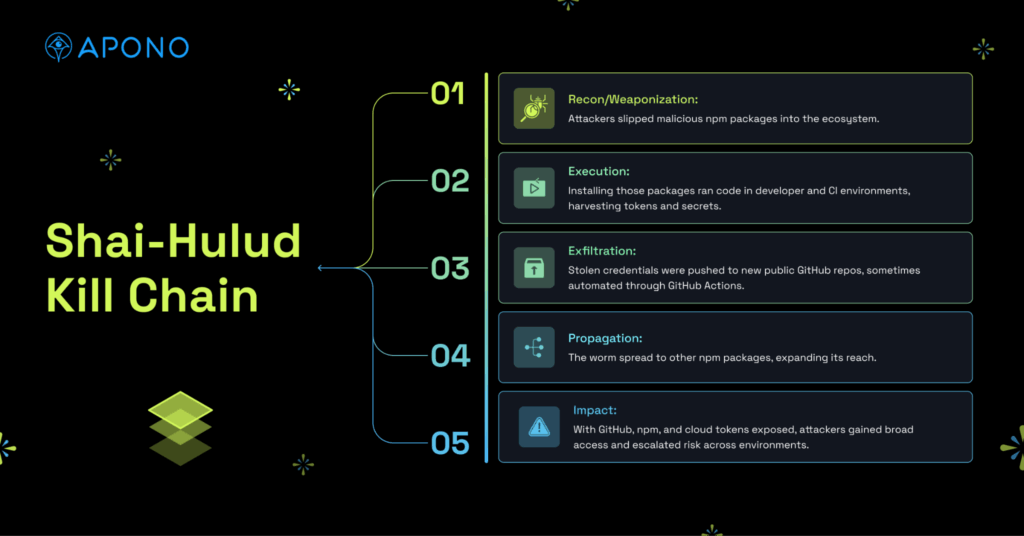

Unmonitored NHIs are among the most consistent attack vectors in identity-driven breaches today. Service accounts and automation agents run critical workflows in CI/CD pipelines, monitoring systems, and infrastructure tools. These identities often carry long-lived credentials with powerful permissions. Unlike human users, they rarely leave the organization, so deprovisioning processes don’t catch them.

When one of these accounts is forgotten or left unmonitored, it becomes a permanent backdoor. Attackers frequently target exposed API keys or tokens for this reason, knowing they are less likely to be rotated or reviewed. As we’ve seen with emerging issues around the Model Context Protocol (MCP), unsecured machine-to-machine communications can further amplify the risks of unmanaged NHIs.

Recent examples include Microsoft’s 2023 SAS Token Leak, where researchers inadvertently published a token that exposed 38TB of internal data, and the BeyondTrust API Key Breach in 2024, where attackers exploited an overprivileged, static key to reset passwords and escalate privileges. Both incidents highlight how unmanaged non-human identities can open the door to large-scale compromise.
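As an illustration of the kind of check that catches forgotten machine credentials, the sketch below flags keys that are overdue for rotation or have gone unused. The `Credential` shape, the 90-day and 30-day thresholds, and the sample inventory are all assumptions for the example, not values taken from any standard or product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Assumed thresholds for illustration; tune them to your own policy.
MAX_AGE = timedelta(days=90)   # rotate keys older than this
MAX_IDLE = timedelta(days=30)  # investigate keys unused for longer than this


@dataclass
class Credential:
    owner: str
    created_at: datetime
    last_used_at: Optional[datetime]  # None means never used


def flag_credentials(creds, now):
    """Return (owner, reasons) for every credential that is stale or idle."""
    findings = []
    for cred in creds:
        reasons = []
        if now - cred.created_at > MAX_AGE:
            reasons.append("not rotated")
        if cred.last_used_at is None or now - cred.last_used_at > MAX_IDLE:
            reasons.append("idle")
        if reasons:
            findings.append((cred.owner, reasons))
    return findings


def utc(year, month, day):
    return datetime(year, month, day, tzinfo=timezone.utc)


# Hypothetical inventory of service-account keys.
inventory = [
    Credential("ci-runner", utc(2024, 1, 1), utc(2024, 12, 31)),
    Credential("old-exporter", utc(2024, 6, 1), None),
    Credential("fresh-bot", utc(2024, 12, 15), utc(2024, 12, 30)),
]

for owner, reasons in flag_credentials(inventory, now=utc(2025, 1, 1)):
    print(owner, reasons)
# ci-runner ['not rotated']
# old-exporter ['not rotated', 'idle']
```

A report like this only finds the problem. Replacing long-lived keys with short-lived, scoped credentials is what removes it.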

An essential NHI security best practice is to run a Cloud Access Assessment, which Apono offers at no cost (for a limited time only), to uncover risks in your AWS environment. Apono’s platform is built to close this blind spot by enforcing JIT and JEP policies for NHIs just as it does for human accounts, stopping long-lived keys from becoming backdoors.

5. Fragmented Visibility

Most enterprises work across multiple clouds, each with its own identity console and reporting format. Security teams trying to answer “who can access sensitive data” are forced to stitch together incomplete reports. The lack of a unified view leaves gaps for auditors and prevents real-time oversight—a challenge that becomes even more critical in industries like FinTech or government, which are subject to additional compliance requirements like CUI Basic.
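A unified view usually starts with normalizing each provider's report into one schema so that a single query can answer "who can access this resource?". The sketch below assumes three simplified export shapes; the field names and rows are hypothetical and do not match any provider's actual report format.

```python
# Hypothetical field names for each cloud's export: (identity, resource, permission).
FIELD_MAPS = {
    "aws": ("principal", "resource", "action"),
    "azure": ("assignee", "scope", "role"),
    "gcp": ("member", "target", "permission"),
}


def normalize(cloud, rows):
    """Map one cloud's rows onto a shared identity/resource/permission schema."""
    id_key, res_key, perm_key = FIELD_MAPS[cloud]
    return [
        {"cloud": cloud, "identity": r[id_key], "resource": r[res_key], "permission": r[perm_key]}
        for r in rows
    ]


def who_can_access(entitlements, resource):
    """List (cloud, identity, permission) for every entitlement on the given resource."""
    return sorted(
        (e["cloud"], e["identity"], e["permission"])
        for e in entitlements
        if e["resource"] == resource
    )


# Hypothetical exports from three consoles.
aws_rows = [{"principal": "role/etl", "resource": "customer-db", "action": "read"}]
azure_rows = [{"assignee": "sp-reporting", "scope": "customer-db", "role": "reader"}]
gcp_rows = [{"member": "serviceAccount:backup", "target": "logs-bucket", "permission": "write"}]

unified = (
    normalize("aws", aws_rows)
    + normalize("azure", azure_rows)
    + normalize("gcp", gcp_rows)
)

print(who_can_access(unified, "customer-db"))
# [('aws', 'role/etl', 'read'), ('azure', 'sp-reporting', 'reader')]
```

In practice the hard part is mapping each provider's resource identifiers and permission semantics onto comparable values. This sketch assumes that mapping has already been done.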

How Modern IGA is Evolving

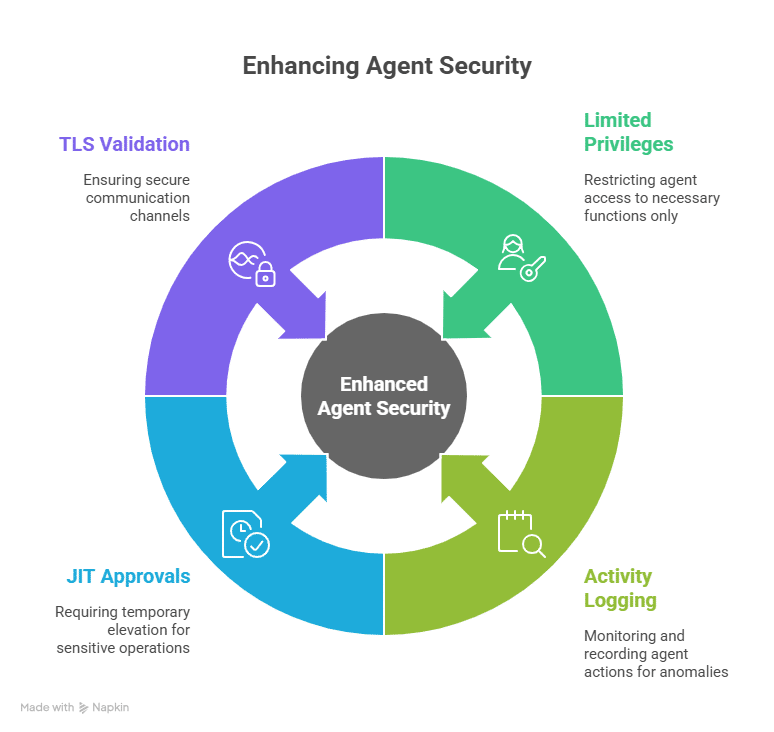

Identity governance is moving from periodic checks to continuous oversight. Instead of leaving broad permissions in place and revisiting them months later, newer approaches shift towards:

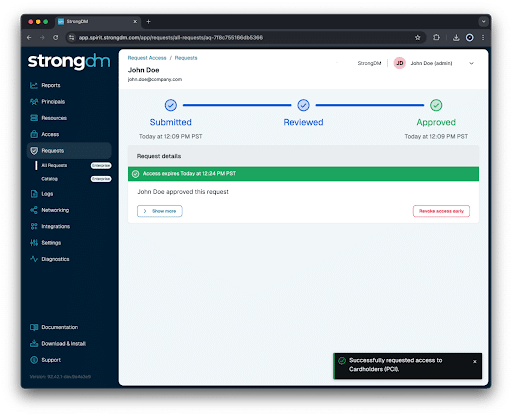

- Just-in-Time access (JIT): Temporary access that expires automatically and reduces the window of risk while giving auditors a clearer picture of how access is actually being used. JIT access automation and contextual approval workflows are essential for scaling governance without undermining developer productivity.

- Zero Trust: Assumes no identity should have standing access by default. Every request must be verified in context, regardless of whether it comes from a human developer or a bot in a CI/CD pipeline.

- Just-Enough Privileges (JEP): Grants the minimum rights needed for a task for the shortest possible time. JEP is particularly important for NHIs: it addresses the chronic overprovisioning of machine identities, aligns with Zero Trust, and directly reduces the blast radius of a potential compromise.

- Workflow integration: Approvals embedded into Slack, Teams, or CLI so governance fits into daily developer workflows.

By enforcing just-in-time access and contextual approvals, IGA reduces the standing permissions that often undermine API security in CI/CD pipelines and cloud workloads.
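The core mechanic of JIT access, a grant that carries its own expiry and leaves an audit trail, can be sketched in a few lines. The `JitAccess` class below is a hypothetical in-memory illustration, not Apono's implementation or API. It omits the approval step and takes the current time as a parameter so the behavior is easy to verify.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    identity: str
    resource: str
    permission: str
    expires_at: datetime


class JitAccess:
    """In-memory sketch: time-bound grants plus an append-only audit log."""

    def __init__(self):
        self._grants = []
        self.audit_log = []  # tuples of (event, identity, resource, permission, timestamp)

    def request(self, identity, resource, permission, ttl, now):
        """Create a grant that expires ttl after now and record it."""
        grant = Grant(identity, resource, permission, now + ttl)
        self._grants.append(grant)
        self.audit_log.append(("granted", identity, resource, permission, now))
        return grant

    def is_allowed(self, identity, resource, permission, now):
        """Check access, dropping any grants that have expired by now."""
        self._expire(now)
        wanted = (identity, resource, permission)
        return any((g.identity, g.resource, g.permission) == wanted for g in self._grants)

    def _expire(self, now):
        active = []
        for g in self._grants:
            if g.expires_at <= now:
                self.audit_log.append(
                    ("expired", g.identity, g.resource, g.permission, g.expires_at)
                )
            else:
                active.append(g)
        self._grants = active


t0 = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
jit = JitAccess()
jit.request("deploy-bot", "prod-db", "read", ttl=timedelta(minutes=30), now=t0)

print(jit.is_allowed("deploy-bot", "prod-db", "read", now=t0 + timedelta(minutes=10)))  # True
print(jit.is_allowed("deploy-bot", "prod-db", "read", now=t0 + timedelta(minutes=31)))  # False
print([event[0] for event in jit.audit_log])  # ['granted', 'expired']
```

Because the expiry is part of the grant itself, nobody has to remember to revoke access, and the audit log shows both when access began and when it ended.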

Bringing Automation to the Center of Governance with Apono

Cloud-native deployments and the explosion of non-human identities have pushed traditional identity governance past its limits. Static reviews and manual approvals leave too much standing access in environments where roles and permissions change constantly. To reduce risk, governance needs automation, time-bound access, and policies that apply equally to people and non-human accounts.

Apono redefines IGA for cloud-native teams at a time when compliance frameworks increasingly require full visibility into NHI governance. Its platform automates JIT and JEP to eliminate risky standing permissions, generates granular audit logs for compliance, and applies governance equally to human and non-human identities. Approvals flow directly through Slack, Teams, or CLI, with every action logged and every change auditable.

With built-in break-glass and on-call flows, and deployment in under 15 minutes, Apono delivers Zero Trust governance at the speed of modern infrastructure.

Ready to Eliminate Standing Access Risk?

Apono closes the gap by automating JIT and JEP for both human and non-human identities, stopping long-lived keys from becoming backdoors. Download The Security Leader’s Guide to Eliminating Standing Access Risk to see how leading cybersecurity companies are rethinking access control.