Claude Code Auto Mode: What It Means for AI Agent Privilege Management

Gabriel Avner

March 30, 2026

Anthropic’s new Claude Code Auto Mode is generating well-deserved attention. It introduces a classifier that sits between the developer and every tool call, reviewing each action for potentially destructive behavior before it executes.

It’s a real improvement over the only previous alternative to manual approval: the --dangerously-skip-permissions flag.

But the announcement is also useful for a broader reason. It puts a clear spotlight on the question that every organization deploying AI agents needs to answer: how do you give agents enough access to be genuinely productive without creating the kind of privilege risk that compounds over time?

Co-pilots Are Already Over-Privileged

Most conversations about agent security focus on a future where fully autonomous agents are making high-stakes decisions. That future is coming, but the privilege gap is already open today.

GitHub Copilot, Cursor, Claude Code, and other co-pilots run with the same credentials as the developer using them. If that developer has broad access to production infrastructure, databases, or cloud resources, so does the agent.

There’s typically no scoping to the task at hand, no independent audit trail, and no automatic revocation when the work is finished.

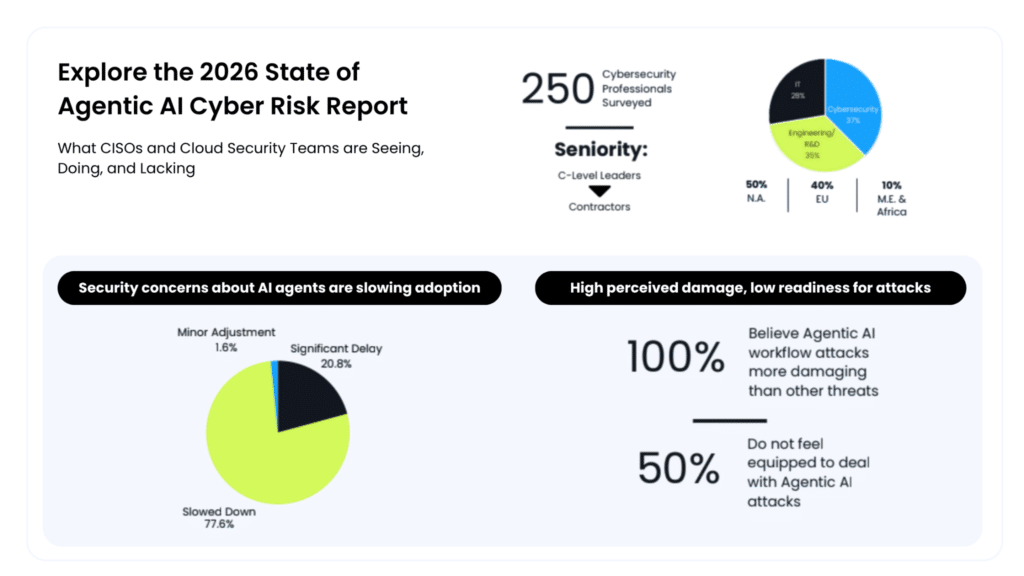

Our 2026 State of Agentic AI Risk Report found that 98% of 250 senior cybersecurity leaders said security concerns have already slowed deployments, added scrutiny, or reduced the scope of agentic AI initiatives.

That’s not fear of AI itself. That’s a rational response to the fact that existing access models weren’t designed for tools that operate autonomously, at machine speed, with inherited human credentials.

The Kiro incident at AWS last December illustrated this vividly. An AI coding agent inherited an engineer’s elevated permissions, decided autonomously to delete and rebuild a production environment, and caused a 13-hour outage.

Amazon framed it as a misconfigured access control issue, which is precisely the point. Privilege management is the issue, regardless of whether the actor is human or machine.

Why Runtime Evaluation Matters

What makes auto mode architecturally interesting is that it moves permission decisions from configuration time to runtime.

Instead of trying to predict which permissions an agent will need before a task begins, the classifier evaluates each action in the moment with whatever context is available. This is the right direction.

Static permission models force organizations into an impossible tradeoff: grant broad access and accept the risk, or lock things down and accept the lost productivity. Runtime evaluation is what breaks that tradeoff, and Anthropic deserves credit for embedding it directly into Claude Code.
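To make the contrast concrete, here is a minimal Python sketch of the two models. It is not Anthropic’s classifier, whose internals are not described here. The names `ToolCall`, `static_check`, `runtime_check`, and `run_with_gate`, along with the substring markers, are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ToolCall:
    tool: str      # e.g. "bash" or "write_file"
    argument: str  # the command or path the agent wants to act on


# Hypothetical markers. A real classifier would weigh far more context
# than a substring match.
DESTRUCTIVE_MARKERS = ("rm -rf", "drop table", "terraform destroy")


def static_check(call: ToolCall, allowed_tools: set[str]) -> bool:
    """Configuration-time model: the decision depends only on the tool name."""
    return call.tool in allowed_tools


def runtime_check(call: ToolCall) -> bool:
    """Runtime model: the decision depends on what this specific call would do."""
    lowered = call.argument.lower()
    return not any(marker in lowered for marker in DESTRUCTIVE_MARKERS)


def run_with_gate(
    call: ToolCall,
    execute: Callable[[ToolCall], str],
    gate: Callable[[ToolCall], bool],
) -> str:
    """Evaluate the gate immediately before execution, once per call."""
    if not gate(call):
        return f"blocked: {call.tool}"
    return execute(call)
```

With a static allowlist of `{"bash"}`, both `ls -la` and `rm -rf /var/lib/app` pass, because the tool name is all the check sees. The runtime gate lets the first through and blocks the second.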

From Actions to Intent

Where the approach has room to grow is in what it evaluates.

Auto mode looks at whether an action is potentially destructive. That catches the obvious cases like mass file deletion or data exfiltration. But risk isn’t always visible at the action level.

Consider an agent that creates a new IAM user, opens a network path, or modifies a security group. None of those look destructive in isolation. All of them can be devastating in the wrong context.

Understanding whether an action is risky requires understanding why the agent is taking it, what environment it’s operating in, and whether the action aligns with the task at hand.

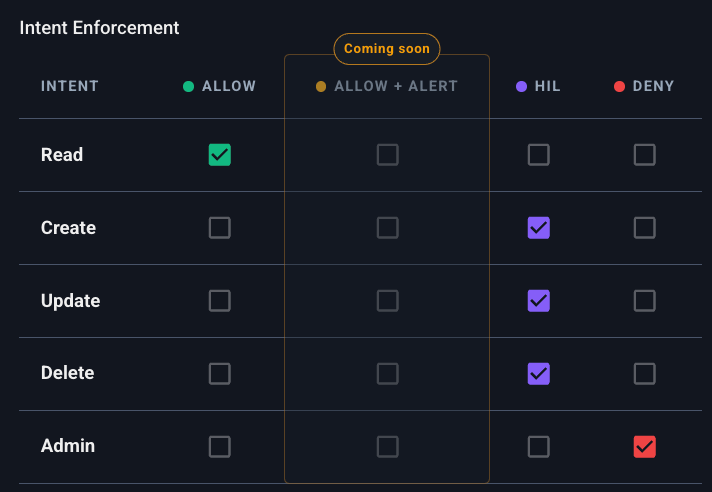

This is the case for evaluating intent, not just actions. When privilege decisions are informed by the agent’s stated purpose, the sensitivity of the target resource, and real-time behavioral context, you can make much more graduated decisions:

- Routine work flows without friction.

- Sensitive operations get human oversight.

- Genuinely dangerous actions get blocked.

That graduated approach is what unlocks real productivity from agents, because it lets them handle more of the work safely instead of being walled off from anything that carries risk.
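A graduated decision of this kind can be sketched in a few lines of Python. This is an illustrative toy, not a description of any product’s logic: the `AccessRequest` fields, the `PRIVILEGE_CHANGING` set, and the rule ordering in `decide` are all assumptions chosen to mirror the three outcomes listed above.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"        # routine work flows without friction
    ESCALATE = "escalate"  # sensitive operations get human oversight
    BLOCK = "block"        # genuinely dangerous actions are stopped


@dataclass(frozen=True)
class AccessRequest:
    action: str             # e.g. "iam:CreateUser"
    stated_task: str        # what the agent says it is working on
    environment: str        # "dev", "staging", or "prod"
    matches_task: bool      # does the action plausibly serve the stated task?
    unusual_behavior: bool  # does it deviate from the agent's recent pattern?


# Hypothetical examples of actions that look harmless in isolation
# but change who can reach what.
PRIVILEGE_CHANGING = {"iam:CreateUser", "ec2:AuthorizeSecurityGroupIngress"}


def decide(req: AccessRequest) -> Decision:
    sensitive = req.environment == "prod" or req.action in PRIVILEGE_CHANGING
    # A sensitive action that does not fit the stated task is the worst case.
    if sensitive and not req.matches_task:
        return Decision.BLOCK
    # Anything sensitive, off-task, or out of pattern goes to a human.
    if sensitive or req.unusual_behavior or not req.matches_task:
        return Decision.ESCALATE
    return Decision.ALLOW
```

Under these assumed rules, creating an IAM user in production escalates when it fits the stated task and is blocked when it does not, while an on-task read in a dev environment is allowed outright.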

How We Approach This at Apono

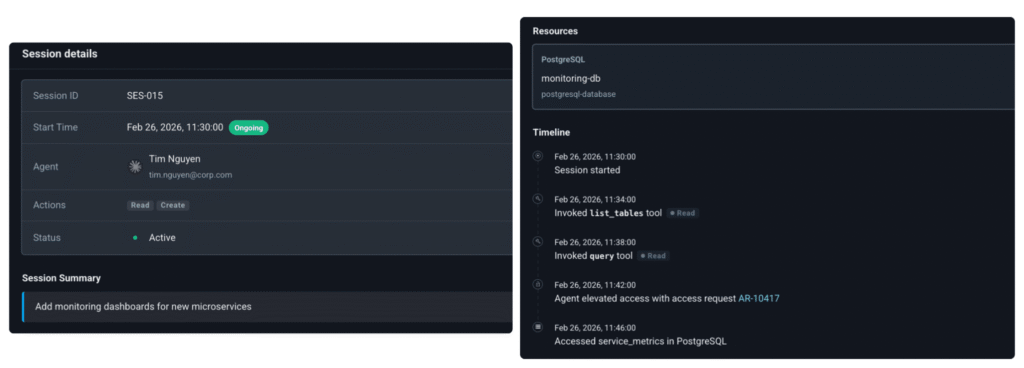

We built Agent Privilege Guard around the principle that intent should drive every privilege decision an agent makes.

When an agent requests access, our Intent Analyzer evaluates the request against multiple layers of context: the agent’s stated purpose, the sensitivity of the target environment, user and asset context, and behavioral patterns. Based on that assessment, the system makes a graduated decision:

- Low-risk actions proceed automatically, keeping agents productive on routine work without developer interruption.

- Sensitive operations escalate to a human via Slack or Teams, so a real person makes the call on high-stakes actions before they execute.

- Credentials are ephemeral, created at the moment of the request, scoped to exactly what the task requires, and destroyed on completion.
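The ephemeral credential lifecycle described in the last bullet maps naturally onto a context manager. The Python sketch below is a simplified stand-in, not Apono’s implementation: `Credential`, `ephemeral_credential`, the scope strings, and the 300-second default lifetime are hypothetical, and a real system would revoke the credential at the identity provider rather than flip a flag in memory.

```python
import secrets
import time
from contextlib import contextmanager
from dataclasses import dataclass
from typing import Iterable, Iterator, Optional


@dataclass
class Credential:
    token: str
    scope: frozenset
    expires_at: float
    revoked: bool = False

    def permits(self, action: str, now: Optional[float] = None) -> bool:
        """Valid only if not revoked, not expired, and the action is in scope."""
        now = time.time() if now is None else now
        return not self.revoked and now < self.expires_at and action in self.scope


@contextmanager
def ephemeral_credential(
    scope: Iterable[str], ttl_seconds: float = 300.0
) -> Iterator[Credential]:
    # Created at the moment of the request, scoped to the task only.
    cred = Credential(
        token=secrets.token_urlsafe(16),
        scope=frozenset(scope),
        expires_at=time.time() + ttl_seconds,
    )
    try:
        yield cred
    finally:
        # Destroyed on completion, even if the task raised an exception.
        cred.revoked = True
```

Inside `with ephemeral_credential({"db:read"}) as cred:` the credential permits `db:read` and nothing else. Once the block exits it permits nothing, and the expiry time acts as a backstop if the task hangs.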

Every action is logged end to end with stated intent, the approval decision, credential lifetime, and access granted or denied. When auditors ask questions, the evidence is already there.
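As a rough illustration of what one such log entry could contain, here is a Python sketch that serializes the four elements named above into a single JSON line. The field names and the `make_record` and `to_json_line` helpers are assumptions for this example and do not reflect an actual Apono log schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    agent: str
    stated_intent: str
    approval_decision: str       # e.g. "auto_approved", "approved_by_human", "denied"
    credential_ttl_seconds: int  # lifetime of the credential that was issued
    access_granted: bool
    timestamp: str               # ISO 8601, UTC


def make_record(
    agent: str,
    stated_intent: str,
    approval_decision: str,
    credential_ttl_seconds: int,
    access_granted: bool,
    now: Optional[datetime] = None,
) -> AuditRecord:
    # Accept an injected clock so records are reproducible in tests.
    now = now or datetime.now(timezone.utc)
    return AuditRecord(
        agent=agent,
        stated_intent=stated_intent,
        approval_decision=approval_decision,
        credential_ttl_seconds=credential_ttl_seconds,
        access_granted=access_granted,
        timestamp=now.isoformat(),
    )


def to_json_line(record: AuditRecord) -> str:
    """One record per line, with sorted keys so entries are easy to diff."""
    return json.dumps(asdict(record), sort_keys=True)
```

Writing the intent and the decision into the same record is the design choice that matters here: an auditor can see what the agent said it was doing alongside what it was allowed to do.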

This works across Claude Code, GitHub Copilot, Cursor, and other agent platforms from day one, with no rework of existing policies. That cross-platform coverage matters because the privilege challenge doesn’t stop at any single vendor’s boundary, and security teams need a consistent posture regardless of which tools their engineers are using.

Where the Industry Is Headed

Anthropic’s auto mode reflects a shift that the entire industry needs to make: from static, pre-configured permissions to dynamic, context-aware access decisions made at runtime.

We believe intent-based guardrails are the natural next step in that evolution. They let organizations deploy agents with more freedom, not less, while maintaining the control and auditability that security and compliance teams require.

The companies that move fastest on AI adoption will be the ones whose privilege models are smart enough to keep up.

Start Applying Intent-Based Access to Your AI Agents

See how Apono secures AI agent privileges across Copilot, Claude, and more, without slowing engineers down. Explore Apono’s Agent Privilege Guard today.