6 Data Governance Principles You Need to Know

The Apono Team

February 19, 2026

6 Data Governance Principles You Need to Know

At some point, something bad always happens. Incidents like NHI sprawl and data ownership are always preventable. A supply chain attack finds its way either through upstream infiltration or downstream delivery. However, despite being aware of this, the problem persists.

54% of large organizations see supply chain challenges as a barrier to cyber resilience. There is complexity and interdependency among different systems, software, and teams that require access to one another. When security is lax in one instance, it creates a potential point of attack for the entire system.

It’s the weakest link at play. Data governance exists to identify access weak points and actively eliminate them before standing permissions and unmanaged identities turn into an attack path.

What is data governance?

Data governance defines and orchestrates who can access data, for what purpose, under what conditions, and how that access is monitored and audited. It governs data throughout its entire lifecycle, from creation to deletion, to form the basis of identity and access governance. For DevOps teams, it must be enforced directly at the access layer through automation and Just-In-Time controls, rather than being discovered after the fact through audits.

As cloud environments scale, non-human identities (NHIs) such as service accounts, CI/CD runners, workload identities, and AI agents now outnumber human users in many organizations.

When these identities are over-permissioned, long-lived, or lack clear ownership, they become standing access paths that bypass governance entirely and expand blast radius by default. Token sprawl and unmanaged credentials dramatically expand the attack surface and enable lateral movement if a single identity is compromised, making non-human identities a high-impact vector for modern cyber threats.

| Discipline | Key Question | Primary Focus |

| Governance | Who decides, and what are the rules? | Strategy, Policy, Accountability |

| Management | How do we move and store it? | Operations, Integration, Storage |

| Security | How do we block intruders? | Encryption, Defense, Hardening |

| IAM | Is this the right user? | Identity, Authentication, Permissions |

| Compliance | Are we meeting the law? | Legal mandates, External Audits |

Why Data Governance Matters for Security and Compliance

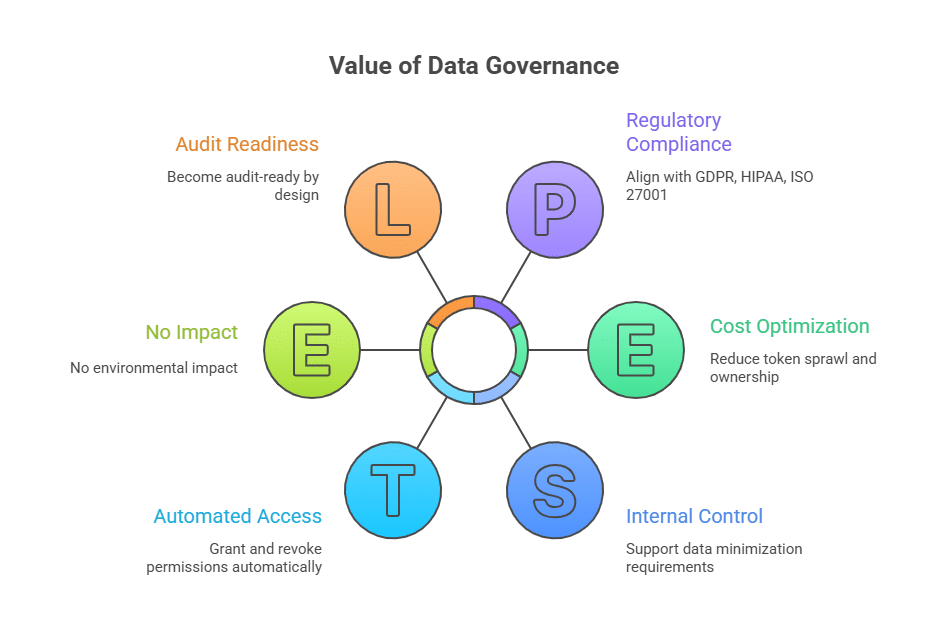

Centralized identity registries and least-privilege policies only work effectively when paired with Just-In-Time (JIT) access and automated access control, which automatically grants and revokes permissions. In this way, you can directly address common NHI failure modes such as token sprawl and unclear ownership.

Enforcing time-bound, purpose-specific access aligns data governance with regulatory requirements, including GDPR, HIPAA, and ISO 27001. Both human and non-human identities can access only the data required for a specific task, therefore supporting internal control and data minimization requirements by default.

When you eliminate standing access, data governance becomes audit-ready by design. Every permission is time-limited and logged automatically for faster root-cause analysis and clear evidence of why access controls prevented an incident.

In cloud-native environments dominated by non-human identities, data governance is unenforceable without JIT because standing permissions erase ownership, purpose, and auditability by default.

6 Data Governance Principles You Need to Know

1. Clear Data Ownership and Accountability

Clear data ownership and accountability are the formal assignment of authority and responsibility for specific individuals or teams. Ownership makes access traceable, and accountability ensures compliance with regulations such as GDPR and HIPAA.

How to implement:

- Define and assign specific roles to avoid ambiguity. For example, the ultimate data owners are senior leaders with the most accountability and decision rights.

- Use automation to make ownership visible and enforceable through data catalogs, which are centralized metadata of ownership information. Also, use AI-driven monitoring to automate flagging of quality issues and detection of non-compliance.

2. Least-Privilege and Purpose-Based Access

Least privilege gives the absolute minimum permissions required to perform the specific, scoped task. The core concept behind least privilege is that access is denied to everything by default and permission is incrementally added as needed. Purpose-based access adds context to least-privilege by creating the scope for existence and justification behind the request for access.

Least-privilege and time-bound access are especially critical at the database layer, where over-permissioned credentials are a common source of data exposure. Automating secure database access with just-in-time permissions gives developers and services the ability to query sensitive data only when required, and only for the exact scope of their task.

How to implement:

- Establish a baseline of zero access by setting access to the lowest possible privilege level, and conduct regular audits to prevent privilege creep over time through scheduled, frequent automated access reviews. (eg, every 30 or 90 days).

- Define purpose-based policies that require users to select a purpose when requesting access. (eg, financial audit, marketing analysis) The policies should adhere to regulatory requirements for data access and its classification levels.

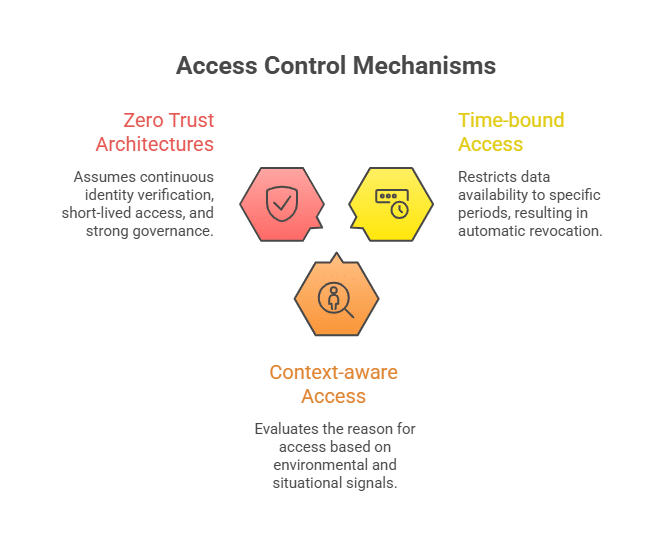

3. Time-Bound and Context-Aware Access

Time-bound aligns with the principle of Just-In-Time (JIT) access, which restricts data available to specific periods or pre-defined durations, resulting in automatic revocation. Context-aware access evaluates the reason for access based on environmental and situational signals.

These mechanisms are core to Zero Trust architectures, which assume continuous identity verification, short-lived access, and strong governance over both human and non-human identities to protect cloud data security at scale.

How to implement:

- Combine JIT access with temporal policies that establish limited life accessibility, especially elevated privileges, so access is decayed over time and forced to rotate.

- Establish access levels based on attributes such as device posture, geography, network, and user identity. Link defined access levels to specific sensitive applications and data sets to give context awareness.

4. Data Classification and Sensitivity Awareness

Data classification is the systematic process of categorizing information based on its sensitivity, allowing access policies, encryption requirements, and approval workflows to be enforced consistently according to risk level. This allows organizations to prioritize security measures such as MFA for high-risk data.

Sensitivity awareness is the process of implementing and maintaining a ‘privacy-first’ culture, where every employee understands their role in data protection and can be used to prevent accidental exposure through the use of AI tools.

How to implement:

- Use autonomous discovery to continuously index data stores, including unstructured data in email, chat apps, and cloud buckets. Combine this with natural language processing (NLP) models to understand the semantic meaning of data in order to filter the difference between generic business contracts and high-value trade secrets.

- Strengthen local stewardship by assigning domain data owners (ie, experts in their specific data areas such as finance or HR) and assigning them as the primary responsible for their ongoing accuracy and privacy.

5. Auditability and Traceability

Data traceability is the ability to follow a piece of data through its entire lifecycle. Auditability refers to the ability to verify that data governance policies were implemented correctly. These two factors matter, as traceability helps with the verification of evidence that your governance rules were implemented.

How to implement:

- Continuous data flow capture that integrates directly into your data stack, automatically discovers and maps data flows in real time.

- Implement immutable audit logs to store tamper-proof evidence and establish a chain of custody for data. To do this, you can turn to automated evidence collection, making it easier to access and analyze as needed.

6. Automation Over Manual Processes

Manual governance often relies on spreadsheets, static PDF policies, and human-led stewardship. This approach can lead to critical failure points, including human error, scalability gaps, and outdated information. Automation must extend beyond data discovery to the access layer, ensuring permissions are granted dynamically and continuously aligned with purpose and risk.

How to implement:

- Use active metadata platforms to act as an intelligence layer through automatic enrichment. For example, automatic inference and enriched metadata based on data lineage.

- Implement autonomous remediation if the quality check fails or it detects schema drifts. Adaptive access through AI agents can continuously monitor access patterns and adjust permissions in real time based on situational context (within predefined policy boundaries and approval thresholds).

How to Operationalize Data Governance Principles in the Cloud

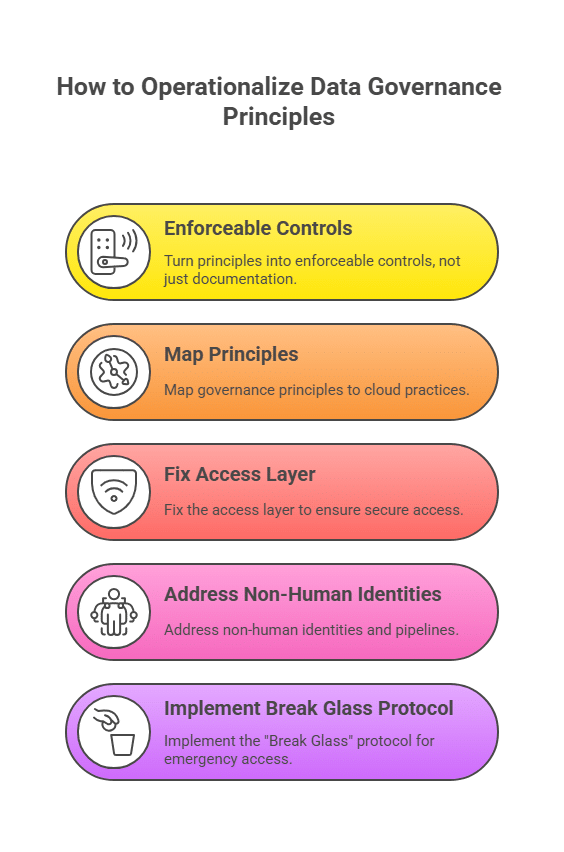

1. Turn Principles into Enforceable Controls and Not Documentation

Codify policies to replace manuals with Policy-as-Code (PAC) so rules are version-controlled and deterministic. This approach will shift left governance checks to block non-compliant infrastructures before they are ever deployed. Automating guardrails, such as cloud-native Service Control Policies (SCPs), will establish hard boundaries to prevent users from performing prohibited actions.

2. Map Governance Principles to Cloud Practices

Use identity governance software to grant temporary and task-specific permissions that expire automatically for JIT access and ensure least privilege. Restrict resources to specific geographical regions for data sovereignty and accountability. Mandate Customer Managed Keys (CMK) for all data-at-rest and block any storage bucket creation that uses a default provider-managed encryption for confidentiality and automated encryption.

3. Fix the Access Layer

Use a Zero Standing Privileges (ZSP) model so no human or non-human identity retains access by default, reducing lateral movement and limiting supply chain risks when one system or dependency is compromised.

When access is required, use Attribute-Based Access Control (ABAC), which grants access based on contextual attributes and, when paired with centralized policy management, avoids the role explosion and policy sprawl common in static RBAC. ABAC checks the subject, resource, action, and environmental attributes to determine accessibility rather than just belonging to a particular group.

4. Address Non-Human Identities and Pipelines

Don’t use ‘forever’ keys with non-human identities such as service accounts and AI agents. Instead, use workload identity federation, which allows pipelines to exchange short-lived, verifiable tokens for cloud access. Treat CI/CD runners like privileged users and only grant elevated permissions during tightly scoped deployment windows, with automatic revocation and full audit trails once the task completes. For legacy systems that require passwords, use a centralized vault to automate rotation and monitor for machine anomalies to trigger automated revocation.

5. Implement the “Break Glass” Protocol (aka, Emergency Access)

Pre-configure highly privileged roles (e.g., Emergency-Admin) that remain inactive and locked during normal operations, and use ABAC so these roles can only be activated if specific environmental conditions are met. The moment a “Break Glass” procedure begins, the organization must go on high alert. Check for a high-fidelity trail during this event for traceability.

Strengthen Your Cyber Resilience with Data Governance

Security failures rarely come from a single misconfiguration. They come from unmanaged access that quietly accumulates over time. In cloud- and supply-chain-heavy environments, resilience depends on eliminating unnecessary access before it becomes an entry point, rather than reacting after the fact.

Apono is built on the principle that identity is the new perimeter. Instead of relying on standing privileges, every human user and NHI gets just-enough access, just-in-time, and only for a clearly defined purpose.

Data governance starts with ownership, but it only works when it’s enforced automatically. Apono turns governance from policy into practice by eliminating standing access, automating approvals, and providing audit-ready visibility across your entire stack. Book a live demo and eliminate standing permissions across your entire stack without slowing developers down.