How Zero Standing Privileges Defuses the Shadow AI Agent Problem

Gabriel Avner

April 29, 2026

As more organizations move past experimentation and start planning real AI agent deployments, the same set of concerns keeps surfacing in our conversations with security teams. Whether the worry is a shadow agent that shows up uninvited or a sanctioned agent going rogue, the questions tend to cluster around control:

- How do we keep agents inside the lines we’ve drawn?

- What happens when a shadow agent we didn’t sanction shows up in the environment?

- What if one of our agents gets manipulated, hallucinates, or just goes off-script and tries to do something it shouldn’t?

These are the right questions to be asking, and they share a common answer that’s more concrete than most people expect.

AI agents are only as dangerous as the privileges they can reach. Move to a Zero Standing Privileges model, route every privilege request through a single control plane that grants access just-in-time and just-enough for the task, and most of the worry goes away along with the standing privileges.

That’s the whole game. Let’s unpack why it works.

Why AI agent risk lives at the privilege layer

The concerns above feel scary because they show up in real, painful ways. Three patterns we see again and again:

Shadow agents. Your engineers are running Copilot, Cursor, and Claude Code right now. Those tools inherit your developers' full credentials, and most security teams have very little visibility into what the tools are actually doing on the developers' behalf.

We've written in more detail about why static privilege models break down in this world.

Agents that can burn the house down. Amazon’s own AI coding tool caused a 13-hour AWS outage. Their postmortem said the quiet part out loud: it was a privilege problem.

The agent had broader permissions than anyone realized, and once it went sideways there was nothing in place to slow it down. Replit’s coding agent did something similar to a production database earlier in the year.

Social engineering of agents. Agents trust their inputs. They can be manipulated through prompt injection, hallucinate their way into bad decisions, or get tricked into actions they were never supposed to take.

And they do it at machine speed, against real systems, with real consequences.

Notice what these have in common. The danger isn’t really the agent. It’s what the agent can reach.

Privilege is where you actually have leverage over AI agents

Here’s a thought experiment. Take away every privilege an agent has. What damage can it do?

None. It’s a chatbot that can’t touch anything.

Now hand it admin credentials to your production database. Now what?

This is why privilege is the place where security teams have the most leverage. Trying to control agent behavior at the agent layer is a fight without an end.

There are too many agents, too many models, too many ways they can be manipulated, and the field is moving too fast for that approach to hold up over time.

Privileges, on the other hand, are concrete. They live in your cloud, your databases, your code repos. You already know how to manage them. The shift is in how you grant them.

Our 2026 State of Agentic AI Cyber Risk Report found that 98% of security leaders have slowed agent deployments because of exactly these concerns.

They’re not being paranoid. They’re being responsible. The controls they have today were never designed for identities that act autonomously, at machine speed, on non-deterministic inputs. The encouraging part is that the missing piece, runtime privilege control, is something you can put in place now.

How Zero Standing Privileges changes the equation

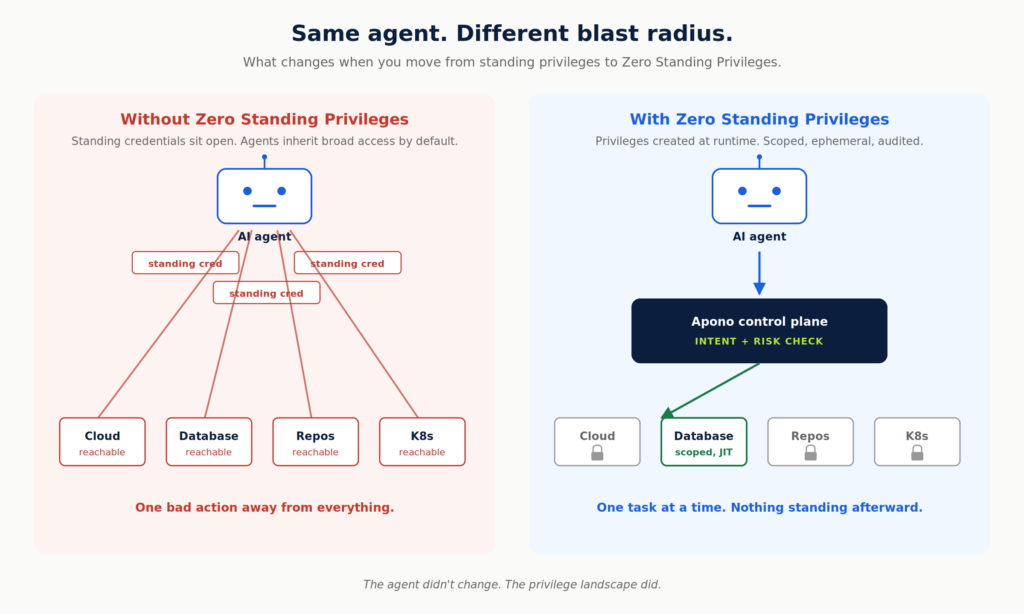

Under Zero Standing Privileges, privilege itself is created at runtime, scoped to the task, and destroyed the moment the work is done. The result is ephemeral credentials, granted just-in-time and just-enough. That shifts the agent risk story in several important ways:

- Standing credentials don’t exist for agents to inherit. That shadow Copilot can’t grab anything sitting around because nothing is sitting around.

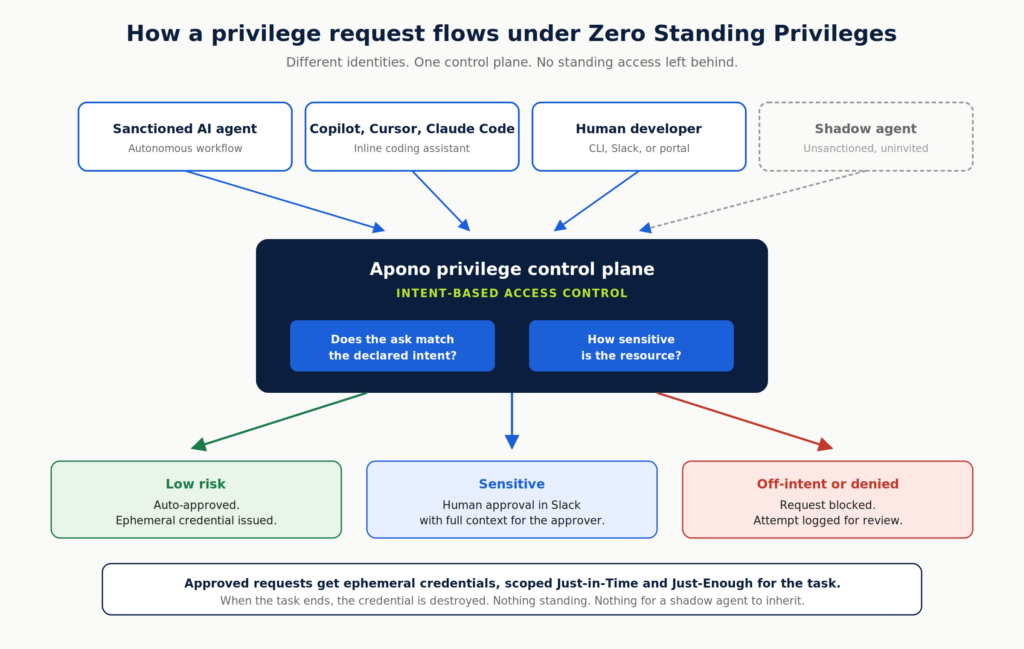

- Every privilege request flows through one control plane. Human, copilot, or autonomous agent, the path is the same. No credential gets minted outside of Apono.

- Risk and intent decide what flows and what stops. Low-risk reads happen automatically with no friction. Sensitive operations escalate to a human in Slack before they execute, with full context for the approver.

- Everything is logged end to end. Declared intent, the action that followed, the approval decision, and the outcome are all tied back to the identity that started it.
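The following minimal Python sketch makes the mechanics in the list above concrete. It is not Apono's API or implementation. `JitBroker`, `Credential`, the five-minute default TTL, and the in-memory audit log are all hypothetical, chosen only to illustrate the pattern. The broker starts with no live credentials and mints one only on request. Each credential is scoped to a single resource and set of actions and expires after a TTL. Every grant, check, and revocation is recorded.

```python
import secrets
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Credential:
    """Ephemeral credential scoped to one identity, one resource, and a set of actions."""
    token: str
    identity: str
    resource: str
    actions: frozenset
    expires_at: float


@dataclass
class JitBroker:
    """Hypothetical single control plane: the only code path that mints credentials."""
    ttl_seconds: float = 300.0                       # assumed TTL, purely illustrative
    clock: Callable[[], float] = time.monotonic
    _live: dict = field(default_factory=dict)        # starts empty: no standing credentials
    audit_log: list = field(default_factory=list)    # every grant/check/revoke is recorded

    def _log(self, event: str, **details) -> None:
        self.audit_log.append({"event": event, "at": self.clock(), **details})

    def grant(self, identity: str, resource: str, actions: set) -> Credential:
        # Mint a fresh credential just-in-time, scoped to exactly what was requested.
        cred = Credential(
            token=secrets.token_urlsafe(16),
            identity=identity,
            resource=resource,
            actions=frozenset(actions),
            expires_at=self.clock() + self.ttl_seconds,
        )
        self._live[cred.token] = cred
        self._log("grant", identity=identity, resource=resource, actions=sorted(actions))
        return cred

    def authorize(self, token: str, resource: str, action: str) -> bool:
        cred = self._live.get(token)
        if cred is not None and self.clock() >= cred.expires_at:
            # Expired credentials are destroyed rather than left lying around.
            del self._live[token]
            cred = None
        allowed = cred is not None and cred.resource == resource and action in cred.actions
        self._log("authorize", identity=cred.identity if cred else None,
                  resource=resource, action=action, allowed=allowed)
        return allowed

    def revoke(self, token: str) -> None:
        # Destroy the credential the moment the task is done.
        cred = self._live.pop(token, None)
        if cred is not None:
            self._log("revoke", identity=cred.identity, resource=cred.resource)
```

In this sketch, an agent that never requested access holds nothing to steal. A leaked token is good only for one resource, one set of actions, and a few minutes. A real control plane would also need durable audit storage and integration with the systems it issues credentials for. Those concerns are outside the scope of this illustration.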

This is also why the rogue AI agent scenario gets a lot less terrifying in practice. An agent that’s been manipulated or hallucinates its way into a destructive request still has to come through us to actually do anything.

Intent-based access control compares what the agent is asking for against what it said it was trying to do. If the request doesn’t match that declared intent, or if it crosses a sensitivity threshold, a human sees it before it executes. The blast radius collapses to whatever the agent legitimately needed in this moment, and nothing more.

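The agent ends up able to do more, not less, because the guardrail is intelligent rather than binary. Routine, low-risk work flows through automatically, and only requests that drift from the declared intent or cross a sensitivity threshold wait for a human.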
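The intent check can be sketched the same way. The following Python is a hypothetical illustration, not how Apono implements intent-based access control. The intent names, the `INTENT_SCOPES` table, the numeric sensitivity scores, and the threshold are all assumptions. The check compares the requested resource and action against the scope implied by the agent's declared intent. Anything outside that scope, or at or above the sensitivity threshold, goes to a human instead of executing.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"     # low-risk and consistent with declared intent
    ESCALATE = "escalate_to_human"    # a human approves before anything executes


@dataclass(frozen=True)
class AccessRequest:
    identity: str
    declared_intent: str   # what the agent says it is trying to do
    resource: str
    action: str


# Hypothetical policy tables; a real deployment would derive these from its own context.
INTENT_SCOPES = {
    "generate_sales_report": {("orders-db", "read"), ("customers-db", "read")},
    "rotate_service_key": {("secrets-vault", "write")},
}
SENSITIVITY = {"read": 1, "write": 2, "delete": 3}   # higher means riskier
ESCALATION_THRESHOLD = 2


def decide(req: AccessRequest) -> tuple[Decision, str]:
    # Step 1: does the request match what the agent said it was doing?
    allowed_scope = INTENT_SCOPES.get(req.declared_intent, set())
    if (req.resource, req.action) not in allowed_scope:
        return Decision.ESCALATE, "request does not match declared intent"
    # Step 2: does it cross the sensitivity threshold? Unknown actions count as maximally sensitive.
    if SENSITIVITY.get(req.action, max(SENSITIVITY.values())) >= ESCALATION_THRESHOLD:
        return Decision.ESCALATE, "action crosses sensitivity threshold"
    return Decision.AUTO_APPROVE, "low-risk and within declared intent"
```

With these tables, a report-generation agent reading `orders-db` is approved automatically. The same agent asking to delete from `orders-db` is escalated, because the request no longer matches what it said it was doing. The two outcomes are approve or escalate, not approve or deny, and that is what keeps routine work flowing.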

The bottom line for CISOs

You don’t need to wrangle every agent that shows up in your environment. You need to make sure the only path to anything sensitive runs through a control plane that understands what’s being asked and why.

Eliminate standing privileges, control privilege at runtime based on actual intent and risk, and you defuse the shadow AI agent problem before it has a chance to hurt you.

That’s what lets your team stop blocking agent deployments and start enabling them. The way to win with AI isn’t to slow it down. It’s to give it the right rails to run on.

Assess Your AI Agent Access Risk

AI agents are moving fast, but sensitive access should not be left to chance. Book a personalized assessment with Apono to identify where agent privileges may be creating hidden risk and how to put the right runtime controls in place before that access becomes a security problem.