The Agentic Identity Crisis: Why Your AI Agents Are Your Biggest Identity Blind Spot in 2026

The Apono Team

April 7, 2026

An intern gets admin access to production for a temporary task, but nobody remembers to revoke it. Now imagine that intern works at machine speed, never sleeps, can chain dozens of actions before you've read the Slack ping, and has no instinct for when they're about to do something irreversible.

That's an AI agent in production. For many organizations, it's already inside the environment with access that's broader than anyone would approve for a human. Agentic AI is the most significant identity security problem that most security teams aren't yet treating as one, even though 100% of organizations agree that attacks on agentic AI workflows would be more damaging than traditional cyberattacks. Unless you confront the agentic identity crisis directly, you're unlikely to recognize what actually needs to change before compliance frameworks and threat actors force your hand.

Why Agents Break Traditional Identity Assumptions

For the past few years, the identity security conversation has finally started paying attention to non-human identities (NHIs). Service accounts, API keys, OAuth tokens, CI/CD credentials: the identities that keep the stack running.

The ratio of machine identities to human identities in a typical enterprise is now as much as 150:1, and a large share of those machine identities sit outside identity providers. They’re scattered across cloud providers and SaaS tooling.

Visibility is fragmented, and lifecycle management is mostly an afterthought. Permissions are overly broad because nobody wants to be the person who broke the deployment pipeline on a Friday afternoon. AI agents inherit all of those problems and then add several of their own.

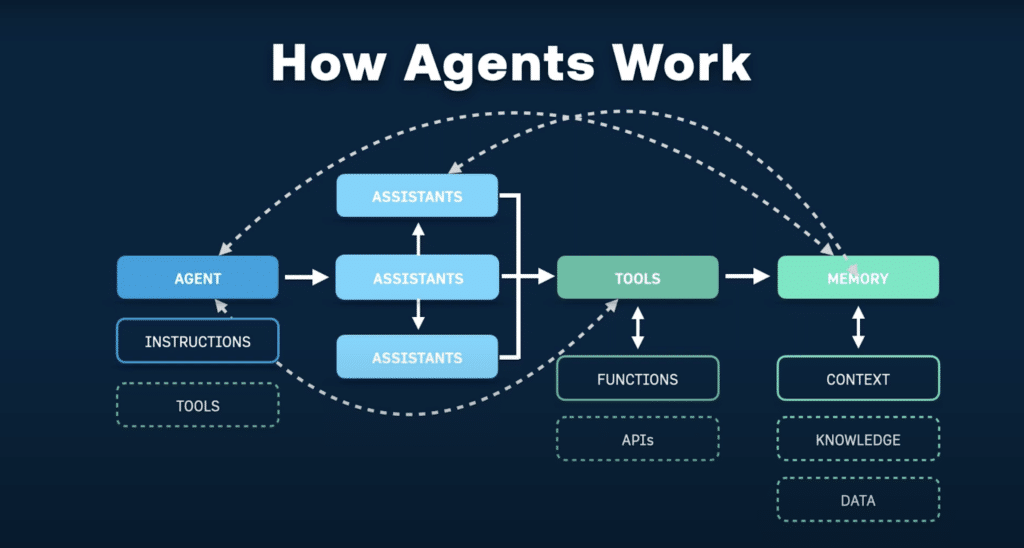

The gap between NHIs and agents is mostly behavioral. A service account is usually built for a narrow purpose, and its behavior is relatively predictable. An API token typically enables a bounded set of calls. Most of these identities are meant to be bounded, even when their permissions aren't. You can anticipate what they'll do because they don't make decisions; they execute instructions.

Agents are different. They plan and take sequences of actions toward a goal. They can call external APIs, read from databases, write to storage buckets, trigger downstream agents, and update records across SaaS platforms. They might do all of that in one task run. Once agents start chaining calls across internal and third-party systems, API security becomes part of your identity problem, not a separate track.

When they hit ambiguity, they improvise, and that improvisation becomes actions in production. Critically, they chain actions across systems in ways that haven’t been reviewed. Often, the methods they use were not anticipated when you granted the permissions.

McKinsey's agentic AI security playbook makes the point well: the risk shifts when you move from systems that enable interactions to systems that drive transactions. A compromised agent can execute business logic at scale with no human in the process to notice that something looks wrong.

Static, long-lived permissions weren’t designed for this. Role-based access control (RBAC) helps organize access, but it still doesn’t solve intent- and task-scoping. Behavioral analytics can help you spot anomalies. But neither fixes the core issue. Both still assume the identity behaves within a recognizable pattern.

Agent behavior is goal-directed, not role-directed, which means required access is a function of what they’re trying to accomplish, not what category they’ve been assigned to. Over-scoped permissions, unintended data exposure, and cross-system chaining are the most consistent patterns appearing in AI cyber risk reports.

How Agent Deployments Quietly Accumulate Risk

A team wants to build an agent that can handle customer data requests. It needs read access to the CRM, write access to a Jira board, and the ability to query a database.

The engineer shipping it doesn’t want to spend two weeks debugging permission errors, so they grant broader access than strictly necessary and tell themselves they’ll scope it down after the proof of concept (POC). That POC then becomes a production agent, and the permissions never get revisited.

This is a predictable outcome of an access model that makes least privilege painful. When role definitions are coarse or when there’s no tooling to create just-enough permissions on demand, overprivileging is the path of least resistance. The problem is that with agents, the blast radius grows because execution is faster and actions span more systems.

Add multi-agent architectures into the mix, where agents are orchestrating other agents and passing data between them, and a single compromised or misconfigured agent could move laterally across your stack. This is also where agentic pen testing starts to earn its place, showing you what an agent can do end-to-end when something goes wrong.

80% of organizations have already encountered risky behaviors from AI agents, including unauthorized system access and improper data exposure, yet only 21% say they feel prepared to manage attacks involving agentic AI or autonomous workflows. That gap is not going to close on its own as agent deployments scale.

Cross-agent task escalation is an emerging risk class of its own. One compromised agent can abuse trust assumptions to get another agent to do something it shouldn’t. Chained agents mean that a vulnerability at step one of a workflow propagates through every subsequent step. By the time anyone notices something went wrong, the damage will have spread.

Autonomous Systems Require Automated Governance

The access model for agentic AI needs to be built around a few foundational principles. These aren't advanced controls; they're table stakes.

Make Access Ephemeral by Default

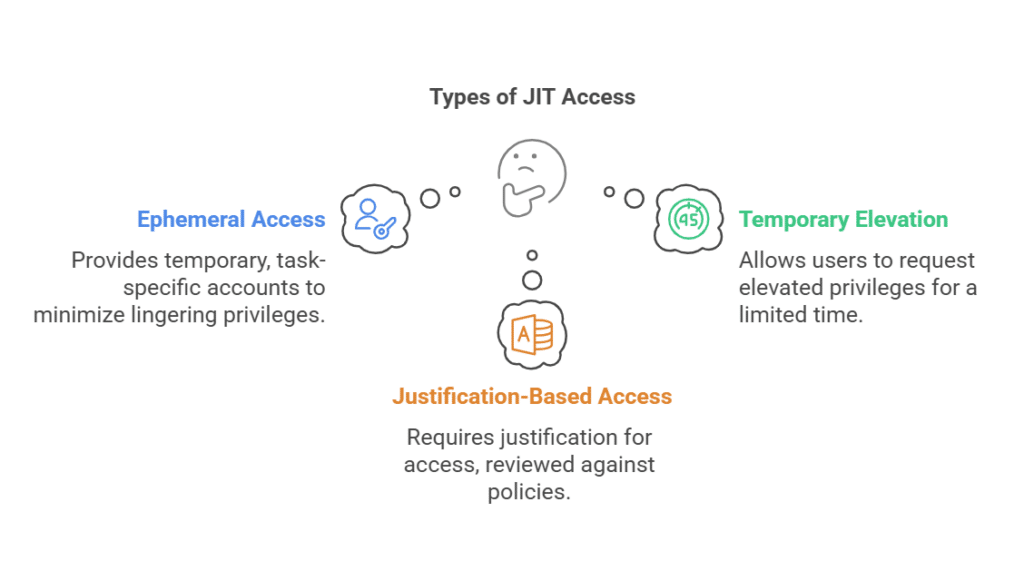

Access must be ephemeral and intent-aware. An agent that needs read access to a database for a specific task should receive exactly that access, scoped to that task, for the duration of that task, and nothing more. Just-in-time (JIT) and just-enough privilege (JEP) access is the minimum viable security model for autonomous systems. This is what zero trust looks like in practice for agents: verify explicitly, grant the minimum, and remove access by default.
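To make the principle concrete, here is a minimal Python sketch of a task-scoped, expiring grant. It is not Apono's API or any product's data model: `TaskGrant`, `issue_grant`, and `is_allowed` are hypothetical names, and in a real deployment this check would live in an access broker or proxy, not in the agent's own process.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class TaskGrant:
    """Hypothetical task-scoped grant: one agent, one task, one resource, explicit actions, hard expiry."""

    agent_id: str
    task_id: str
    resource: str
    actions: frozenset[str]
    expires_at: datetime


def issue_grant(agent_id: str, task_id: str, resource: str, actions: set[str],
                ttl: timedelta, now: datetime | None = None) -> TaskGrant:
    # Access is created on demand for a single task and always carries an expiry,
    # so there is no standing permission for anyone to forget to revoke.
    now = now or datetime.now(timezone.utc)
    return TaskGrant(agent_id, task_id, resource, frozenset(actions), now + ttl)


def is_allowed(grant: TaskGrant, agent_id: str, task_id: str, resource: str,
               action: str, now: datetime | None = None) -> bool:
    # Deny by default: every attribute must match and the grant must still be live.
    now = now or datetime.now(timezone.utc)
    return (
        grant.agent_id == agent_id
        and grant.task_id == task_id
        and grant.resource == resource
        and action in grant.actions
        and now < grant.expires_at
    )


# Example: 15 minutes of read-only access to one table for one customer data request.
t0 = datetime(2026, 4, 7, 2, 0, tzinfo=timezone.utc)
grant = issue_grant("crm-agent", "req-42", "db:customers", {"read"}, timedelta(minutes=15), now=t0)
print(is_allowed(grant, "crm-agent", "req-42", "db:customers", "read", now=t0 + timedelta(minutes=5)))   # True
print(is_allowed(grant, "crm-agent", "req-42", "db:customers", "write", now=t0 + timedelta(minutes=5)))  # False
print(is_allowed(grant, "crm-agent", "req-42", "db:customers", "read", now=t0 + timedelta(minutes=20)))  # False
```

The design choice worth noting is that expiry is part of the grant itself rather than a separate cleanup job: once the TTL passes, the check fails even if nobody revokes anything.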

Log Intent, Not Just Activity

Audit trails have to capture task context and declared intent, not just API calls. If your current logging tells you only that an agent made 400 API calls to your data warehouse between 2 and 3 AM, that alone isn't useful. You need to know what the agent was trying to do, what resources it requested, what it actually touched, and what it changed.
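The four questions above map naturally onto a structured log record. The sketch below assumes a hypothetical `AgentAuditRecord` schema (not a standard or vendor format) serialized as JSON, and adds one derived field that flags anything the agent touched or changed without having requested it.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentAuditRecord:
    """Hypothetical audit schema: what the agent meant to do, not just the calls it made."""

    agent_id: str
    task_id: str
    declared_intent: str                                # goal the agent stated before acting
    requested: list[str] = field(default_factory=list)  # resources it asked for
    touched: list[str] = field(default_factory=list)    # resources it actually read
    changed: list[str] = field(default_factory=list)    # resources it actually modified
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def out_of_scope(self) -> list[str]:
        # Anything touched or changed that was never requested deserves a closer look.
        requested = set(self.requested)
        return sorted({r for r in self.touched + self.changed if r not in requested})

    def to_json(self) -> str:
        record = asdict(self)
        record["out_of_scope"] = self.out_of_scope()
        return json.dumps(record, sort_keys=True)


rec = AgentAuditRecord(
    agent_id="crm-agent",
    task_id="req-42",
    declared_intent="Export order history for a customer data request",
    requested=["warehouse:orders"],
    touched=["warehouse:orders", "warehouse:payments"],
)
print(rec.out_of_scope())  # ['warehouse:payments']
print(rec.to_json())
```

With a record like this, the 2 AM burst of warehouse calls becomes a reviewable statement: the agent said it was exporting orders, and it also read payments data it never asked for.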

Define and Gate High-Risk Actions

High-risk actions need a human in the loop. An agent querying a read-only endpoint is different from an agent deleting records or triggering a payment. Defining which actions cross a risk threshold, and requiring human authorization for those specifically, is how you avoid the kind of incident that ends careers and damages regulatory standing.

The key is to define those actions in advance and require explicit, time-bound approval for them. And that approval needs to happen where teams already work, not in a multi-day ticket queue. Self-serve workflows in Slack, Teams, or the CLI make it possible to introduce human oversight without slowing down engineering.
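A gate like this can be expressed as a small policy check. The following is a minimal sketch under stated assumptions: the `HIGH_RISK_ACTIONS` set, the `Approval` record, and `authorize` are all hypothetical, and the chat or CLI workflow that would actually collect the human's decision is left out; here an approval is just data with an expiry.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical policy: actions that always need a human decision, defined in advance.
HIGH_RISK_ACTIONS = frozenset({"delete_records", "trigger_payment", "grant_access"})


@dataclass(frozen=True)
class Approval:
    approver: str         # the human who approved (e.g. via a chat or CLI workflow)
    task_id: str
    action: str
    expires_at: datetime  # approvals are time-bound, never permanent


def authorize(action: str, task_id: str, approvals: list[Approval],
              now: datetime | None = None) -> bool:
    """Return True if the action may proceed under the human-in-the-loop policy."""
    if action not in HIGH_RISK_ACTIONS:
        return True  # low-risk actions still go through normal task-scoped grants
    now = now or datetime.now(timezone.utc)
    # A high-risk action needs a live approval for this exact action and task.
    return any(
        a.task_id == task_id and a.action == action and now < a.expires_at
        for a in approvals
    )


t0 = datetime(2026, 4, 7, 9, 0, tzinfo=timezone.utc)
approvals = [Approval("alice", "req-42", "delete_records", t0 + timedelta(minutes=10))]
print(authorize("read_crm", "req-42", [], now=t0))                                   # True
print(authorize("trigger_payment", "req-42", approvals, now=t0))                     # False
print(authorize("delete_records", "req-42", approvals, now=t0 + timedelta(minutes=5)))   # True
print(authorize("delete_records", "req-42", approvals, now=t0 + timedelta(minutes=11)))  # False
```

Binding each approval to one action and one task keeps a single "yes" from being reused to justify something else later in the run.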

Manage Agent Access as a Lifecycle

Treat agent access as a lifecycle: onboarding, scoped permissioning, continuous review, and fast revocation when scope changes. The same principles that apply to human identity lifecycle management (provisioning, review, and revocation) apply to agents. And they have to run at machine speed, with automation enforcing policy by default rather than reminders sitting in a ticket queue.
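One way to make that lifecycle enforceable rather than aspirational is to model it as a state machine that rejects illegal transitions. This is a hypothetical sketch: the `Stage` names and the `ALLOWED` transition table are assumptions chosen to mirror the four stages above, not a prescribed model.

```python
from __future__ import annotations

from enum import Enum


class Stage(Enum):
    ONBOARDED = "onboarded"        # identity exists, no permissions yet
    SCOPED = "scoped"              # holds task-scoped permissions
    UNDER_REVIEW = "under_review"  # permissions being re-validated
    REVOKED = "revoked"            # terminal: all access removed


# Hypothetical transition table; any transition not listed here is rejected.
ALLOWED = {
    Stage.ONBOARDED: {Stage.SCOPED, Stage.REVOKED},
    Stage.SCOPED: {Stage.UNDER_REVIEW, Stage.REVOKED},
    Stage.UNDER_REVIEW: {Stage.SCOPED, Stage.REVOKED},
    Stage.REVOKED: set(),
}


class AgentIdentity:
    def __init__(self, agent_id: str) -> None:
        self.agent_id = agent_id
        self.stage = Stage.ONBOARDED
        self.permissions: set[str] = set()

    def _move(self, new_stage: Stage) -> None:
        if new_stage not in ALLOWED[self.stage]:
            raise ValueError(f"{self.agent_id}: {self.stage.value} -> {new_stage.value} not allowed")
        self.stage = new_stage

    def scope(self, permissions: set[str]) -> None:
        # Scoping (or re-scoping after review) replaces permissions rather than adding to them.
        self._move(Stage.SCOPED)
        self.permissions = set(permissions)

    def start_review(self) -> None:
        self._move(Stage.UNDER_REVIEW)

    def revoke(self) -> None:
        # Intended to be triggered automatically when the agent's scope changes.
        self._move(Stage.REVOKED)
        self.permissions.clear()


agent = AgentIdentity("crm-agent")
agent.scope({"crm:read", "jira:write", "db:query"})
agent.start_review()
agent.scope({"crm:read", "db:query"})  # review trims what the POC over-granted
agent.revoke()
print(agent.stage, agent.permissions)  # Stage.REVOKED set()
```

Because re-scoping replaces the permission set, every review is a chance to shrink access back down, which is exactly the step that tends to get skipped when a POC quietly becomes production.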

Treat your AI agents as highly capable, deeply untrustworthy interns. They’re smart enough to be dangerous, and they have absolutely no instinct for when they’re about to do the wrong thing. This is the same operational discipline security teams are already trying to build with machine identity management, except agents force it to happen at machine speed.

Least Privilege Still Applies to Agents

The regulatory and compliance environment hasn't caught up to agentic AI yet. But it's moving fast, and the frameworks that govern identity and access today are broad enough to apply to agent access right now. Agents also change what 'third-party access' means in practice, which is worth reflecting in your vendor risk assessment, especially when agents can pull data from or push actions into external platforms.

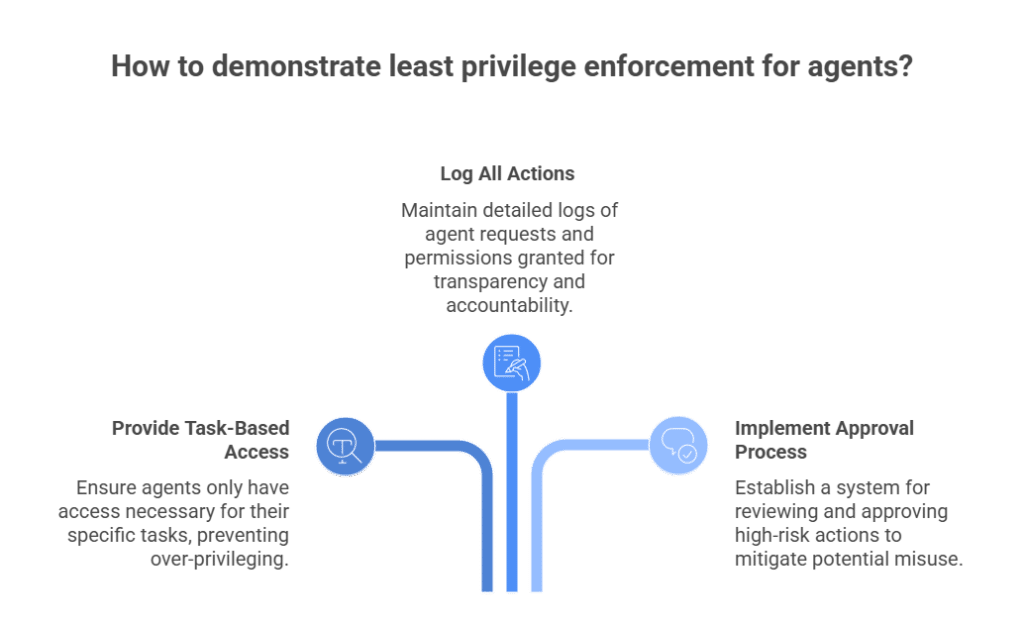

For example, SOC 2's CC6 controls around logical access don't limit their scope to human users. If an agent has standing access to sensitive resources, that's a least privilege violation regardless of whether the auditor asks about it explicitly.

The more interesting question is what happens when frameworks start asking about agents explicitly. There are signs that auditors are already considering these questions, even if the frameworks haven't officially been updated yet. The Forbes Tech Council noted that organizations are accumulating what it calls an “identity tax”: the compounding cost of unmanaged identities. Agents accelerate that tax because they multiply and act quickly.

When an auditor asks you to demonstrate least privilege enforcement for your agent workforce, “we gave it a role” is not going to be enough. Evidence needs to show that access was bounded by the task. Log entries need to include what the agent requested, why, and verification that permissions were not left standing. Auditors will also want proof that team members are checking and approving high-risk actions. That’s what compliance-ready agent access governance looks like.
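Parts of that evidence can be checked mechanically before an auditor ever asks. The sketch below assumes a hypothetical `GrantRecord` export (field names are illustrative, not a real tool's format) and flags two of the gaps described above: grants with no recorded justification, and grants that were never bounded, meaning no expiry and no revocation.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class GrantRecord:
    """Hypothetical export of one agent grant, as it might be handed to an auditor."""

    agent_id: str
    task_id: str
    requested: str                 # what the agent asked for
    justification: str             # why it asked (declared intent)
    granted_at: datetime
    expires_at: datetime | None    # None means no expiry was ever set
    revoked_at: datetime | None    # None means it was never explicitly revoked


def audit_findings(records: list[GrantRecord]) -> list[str]:
    """Return human-readable findings for grants that would not survive an audit."""
    findings = []
    for r in records:
        if not r.justification.strip():
            findings.append(f"{r.agent_id}/{r.task_id}: no recorded justification")
        if r.expires_at is None and r.revoked_at is None:
            findings.append(f"{r.agent_id}/{r.task_id}: standing access (no expiry, never revoked)")
    return findings


records = [
    GrantRecord("crm-agent", "req-42", "db:customers:read", "customer data request",
                datetime(2026, 4, 7, 2, 0), datetime(2026, 4, 7, 2, 15), None),
    GrantRecord("poc-agent", "poc-1", "db:*:admin", "",
                datetime(2026, 1, 12, 9, 0), None, None),
]
for finding in audit_findings(records):
    print(finding)
```

An empty findings list does not prove least privilege on its own, but a non-empty one is precisely the kind of gap that "we gave it a role" cannot explain.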

Organizations that wait for explicit regulatory pressure will end up doing access remediation under a deadline, which is exactly when mistakes slip through. The companies building agentic access governance now aren't doing it because current regulations require it. They're doing it because the alternative is scrambling later to retrofit controls onto a sprawling agent estate.

Operationalizing Agent Access at Scale

The identity security conversation has been shaped for decades by the assumption that the identities you’re governing are human, or at least human-like in their behavior. Agents break that assumption. They’re systems that operate other systems, autonomously, at scale, and often across trust boundaries.

The organizations that will navigate this well are the ones that treat agent access as a new (and very dangerous) risk surface. The only sustainable answer is continuous, contextual, automated access governance, with JIT and JEP as the default. If you're already deploying agents into production workflows, this isn't a 2027 problem. It's a 2026 control gap.

Apono helps security and platform teams automate just-in-time, just-enough access across their entire stack, so agents get exactly what they need, for exactly as long as they need it, with full auditability and human gates where the risk demands it. If you’re deploying agents into production workflows, now is the time to modernize how you govern their access.

See how Apono’s Agent Privilege Guard gives security teams runtime privilege controls for the agentic era, with ephemeral access and intent-aware enforcement. Or, book a demo to see how Apono enforces task-scoped access for humans and AI agents, without slowing your teams down.