Non-Human Identity Sprawl Is the Hidden Cost of AI Velocity

The Apono Team

March 25, 2026

In the current AI boom, we race to use copilots, orchestration scripts, CI workflows, retrieval pipelines, and background jobs. Sometimes, we take for granted that every one of these things needs an identity. Service accounts. OAuth apps. API keys. Short-lived tokens.

As AI velocity increases, so does the number of these non-human identities (NHIs). Beyond model quality, latency, hallucinations, and GPU costs, we also need to consider how these identities affect security. Every agent you spin up carries credentials, and every credential carries permissions.

Multiply that across environments, pipelines, and integrations, and you get the hidden cost of AI velocity: identity sprawl that security teams can’t realistically govern with tickets and quarterly reviews.

What NHI Sprawl Actually Looks Like in 2026

To understand NHI sprawl today, it’s worth pausing for a moment to think about what this sprawl looked like just before the AI era.

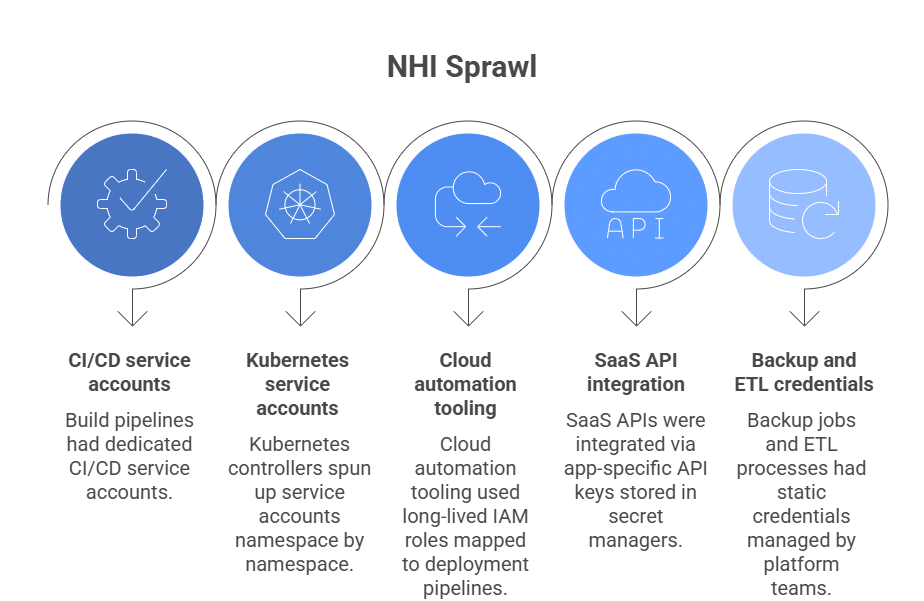

Five years ago, non-human identities were already quite abundant. But the patterns were predictable:

- Build pipelines had dedicated CI/CD service accounts.

- Kubernetes controllers spun up service accounts namespace by namespace.

- Cloud automation tooling used long-lived IAM roles mapped to deployment pipelines.

- SaaS APIs were integrated via app-specific API keys stored in secret managers.

- Backup jobs and ETL processes had static credentials managed by platform teams.

That was sprawl, but it was manageable sprawl. There were usually clear owners (platform, DevOps), predictable lifetimes, and manual reviews tied to release cycles or audits.

AI changed the rate and diversity of identity creation. The pattern many companies now face is:

- Every AI agent/platform needs API access to internal services, CRM, observability, messaging, etc.

- Every automation pipeline spun up to support those agents needs tokens, often embedded in CI/CD workflows.

- Every orchestration layer generates its own principals to schedule, coordinate, and execute jobs.

- Every multi-stage job expands credential usage across environments.

- Every “agent chain” creates implicit privilege inheritance across systems.

What used to be a handful of long-lived service accounts becomes hundreds or thousands of specialized machine identities scattered across your stack. And the scale is no longer hypothetical: by some industry estimates, machine identities outnumber human identities 80:1 in enterprise environments.

When AI Velocity Meets Identity Governance

Before the AI wave, identity growth in most environments was relatively linear. New services were introduced deliberately. IAM roles were defined around stable workloads. Service accounts were usually tied to long-lived systems. Even if sprawl existed, it evolved at a pace humans could periodically review.

Governance could at least attempt to map identities to systems and owners. But AI velocity collides with governance efforts built around inventories, tickets, and periodic certifications.

This collision creates real cybersecurity risk:

- Privilege drift accelerates. Permissions expand faster than they’re reviewed.

- Blast radius increases. Machine identities often span systems humans never touch directly.

- Detection becomes harder. Machine-to-machine traffic looks normal by default.

- Abuse scales instantly. API-level access means no console login is required.

Why Security Can’t Keep Up

Security can’t keep up because identity governance is still designed around reviewable objects, while AI turns identity into a byproduct of automation. Identities aren’t “created and reviewed.” They’re generated continuously by pipelines and runtime systems.

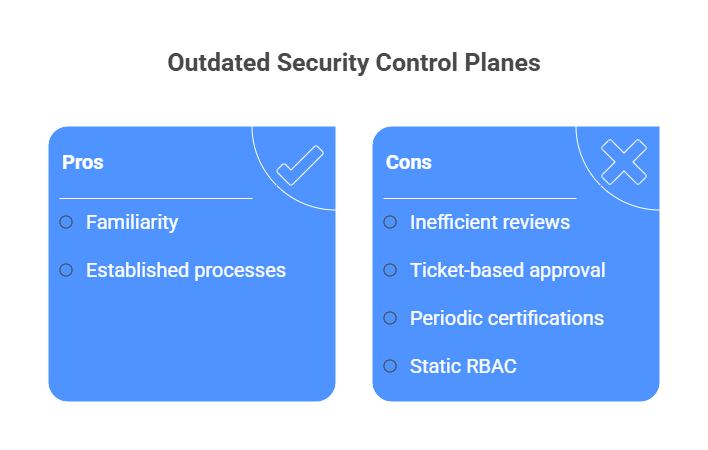

And yet the control plane hasn’t evolved. Security teams often still:

- Review role assignments after deployment

- Approve access via tickets

- Run periodic certifications

- Maintain static RBAC models that assume stability

The other structural problem is visibility. Modern AI stacks rely heavily on short-lived tokens issued via OIDC or STS. Credentials churn rapidly, but privilege is still defined at the role, service account, or OAuth-scope layer, and that layer persists.

Security tooling frequently shows what roles exist, but not whether they’re being exercised appropriately, by which workload, for which task, right now.
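The sketch below makes that visibility gap concrete by comparing what identities are granted with what they were actually observed doing. It is a minimal sketch under stated assumptions:

- The `Grant` record, the `unexercised_permissions` helper, and the sample identities are hypothetical.
- In practice, the two inputs would come from an IAM inventory export and parsed audit logs, whose formats vary by provider.
- The AWS action names are only sample strings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    # Hypothetical record of one permission held by one identity.
    identity: str
    permission: str


def unexercised_permissions(grants, observed_calls):
    """Return, per identity, permissions that are granted but never seen in use.

    grants: iterable of Grant (assumed to come from an IAM inventory export)
    observed_calls: iterable of (identity, permission) pairs (assumed to be parsed from audit logs)
    """
    used = set(observed_calls)
    gaps = {}
    for g in grants:
        if (g.identity, g.permission) not in used:
            gaps.setdefault(g.identity, set()).add(g.permission)
    return gaps


# Illustrative usage with made-up data.
grants = [
    Grant("etl-agent", "s3:GetObject"),
    Grant("etl-agent", "s3:PutObject"),
    Grant("etl-agent", "iam:PassRole"),
]
calls = [("etl-agent", "s3:GetObject")]
gaps = unexercised_permissions(grants, calls)
# gaps["etl-agent"] == {"s3:PutObject", "iam:PassRole"}
```

Granted-but-unused permissions are a reasonable first proxy for privilege drift. Be aware that a short observation window can miss access that is rarely used but legitimate.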

Compliance doesn’t fix this. You can document least privilege and prove access reviews happened. But if identity creation is embedded in CI/CD and runtime federation, then governance evidence will always lag behind the actual access picture. Many organizations formalize identity oversight within a broader cybersecurity risk management plan, but documentation alone doesn’t address runtime privilege drift in AI-heavy environments.

Zero Trust Paired With Non-Human Identity Controls

In practice, most organizations interpret zero trust as stronger authentication for humans, tighter network segmentation, and conditional access policies. Those controls are important, but they are human-first: they assume people are the primary actors inside systems.

In AI-heavy environments, humans aren’t the dominant actors anymore. If identity is the new perimeter, then non-human identities are now the dominant traffic crossing it. Zero trust without explicit NHI governance leaves your highest-volume lane under-controlled.

Just as product development lifecycles require structured governance from ideation through release, identity lifecycles demand the same rigor, from creation to privilege assignment to revocation.

1. From Identity Management to Real-Time Identity Orchestration

Traditional identity management focuses on provisioning and deprovisioning. A user is assigned a role; a service account is granted permissions. The system trusts that assignment until someone revisits it.

That model assumes identities are relatively static. AI systems don’t operate in static environments. They federate across clouds and SaaS platforms. They redeploy frequently, and they rely on ephemeral workloads that request credentials dynamically.

So the control model must shift from managing identities as objects to governing access as runtime decisions. Real-time identity orchestration means:

- Evaluating machine access continuously, not just at creation.

- Governing both human and non-human identities under the same enforcement framework.

- Treating identity decisions as runtime events rather than admin tasks.

Instead of asking, “What does this role have?” the system asks, “Should this identity perform this action right now, in this environment, for this task?”
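Here is a minimal sketch of what such a runtime decision might look like. It assumes a hypothetical `AccessRequest` shape and an in-memory `POLICY` table. The point is the question being asked, not the data structures: access depends on the identity, the action, the environment, and whether the claimed task is active right now.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set


@dataclass(frozen=True)
class AccessRequest:
    identity: str            # workload or agent principal making the call
    action: str              # operation requested, e.g. "crm.records.update"
    environment: str         # e.g. "staging" or "prod"
    task_id: Optional[str]   # job/task the call claims to serve


# Hypothetical policy: (identity, action, environment) tuples that may ever be allowed.
POLICY = {
    ("enrichment-agent", "crm.records.read", "prod"),
    ("enrichment-agent", "crm.records.update", "staging"),
}


def should_allow(req: AccessRequest, active_tasks: Dict[str, Set[str]]) -> bool:
    """Evaluate the request at call time rather than trusting a role assigned earlier."""
    if (req.identity, req.action, req.environment) not in POLICY:
        return False
    # The call must also be tied to a task that is active for this identity right now.
    return req.task_id is not None and req.task_id in active_tasks.get(req.identity, set())
```

The same identity can be allowed in one call and refused in the next. The decision changes when the environment differs or when its task has ended, even though nothing about its role changed.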

2. Credential Half-Life Is Collapsing

Credential lifetimes are shrinking as AI stacks lean on OIDC federation and STS-issued tokens. On paper, this should lower risk, because short-lived credentials reduce exposure windows. But while credentials expire quickly, privilege scopes often remain broad and persistent.

The role still exists, the service account still holds cross-system permissions, and the OAuth integration still has sweeping API scopes.

This creates a new threat profile where:

- Short-lived tokens can still be replayed within their validity window.

- Over-permissioned roles are exercised continuously.

- Bot-driven API abuse happens at machine speed.

- Attackers don’t need long-lived keys if they can repeatedly obtain short-lived tokens via a compromised workload, an OIDC misconfiguration, or a stolen CI identity.

Credential half-life is shrinking, but authorization half-life often isn’t; that asymmetry demands runtime controls.
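One runtime control for the replay risk above is a one-time-use check on each token's `jti` (JWT ID) claim, alongside its `exp` claim. Both are standard JWT claims defined in RFC 7519. The sketch rests on a few assumptions:

- Tokens have already been signature-verified and decoded into a claims dict.
- The tokens in question are meant to be presented once, for example as per-request assertions. Many STS-style session tokens are legitimately reused until they expire, so this check does not fit them.
- `ReplayGuard` is a hypothetical name.

```python
import time


class ReplayGuard:
    """Reject a token whose jti was already seen, or which has expired.

    Assumes claims were already signature-verified and decoded, and that the
    tokens being checked are intended for single use.
    """

    def __init__(self):
        self._seen = {}  # jti -> exp, kept only until the token would expire anyway

    def accept(self, claims, now=None):
        now = time.time() if now is None else now
        # Forget tokens that have expired; they can no longer be replayed.
        self._seen = {j: e for j, e in self._seen.items() if e > now}
        jti, exp = claims.get("jti"), claims.get("exp")
        if jti is None or exp is None or exp <= now:
            return False
        if jti in self._seen:
            return False  # second use inside the validity window: treat as replay
        self._seen[jti] = exp
        return True
```

The guard prunes entries once the token would have expired anyway. As a result, the cache stays bounded by the number of tokens live at any moment.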

3. Dynamic, API-Level Enforcement

Traditional security controls often sit at the network layer: proxies, gateways, firewalls. But most AI-driven interactions happen via APIs.

Dynamic, API-level enforcement means:

- Evaluating identity context at the moment of the call.

- Inspecting action types.

- Applying policy based on workload type, environment, and requested operation.

Rather than just verifying the token is valid, you verify the action is justified.
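Here is a minimal sketch of API-level enforcement as a Python decorator. It assumes the caller's identity context has already been authenticated and is passed in as a dict. The `RULES` table, the `enforce` decorator, and the `deploy_model` handler are all hypothetical. The check rejects a call whose token is valid when the workload type and environment do not justify the requested operation.

```python
import functools


class Forbidden(Exception):
    pass


# Hypothetical rule table: (workload type, environment) -> operations allowed.
RULES = {
    ("inference", "prod"): {"model.predict"},
    ("pipeline", "staging"): {"model.predict", "model.deploy"},
}


def enforce(operation):
    """Wrap an API handler so every call is checked against RULES at call time."""
    def decorator(handler):
        @functools.wraps(handler)
        def wrapper(ctx, *args, **kwargs):
            # A valid token is necessary but not sufficient.
            if not ctx.get("token_valid"):
                raise Forbidden("invalid token")
            allowed = RULES.get((ctx.get("workload_type"), ctx.get("environment")), set())
            if operation not in allowed:
                raise Forbidden(
                    f"{ctx.get('workload_type')} may not {operation} in {ctx.get('environment')}"
                )
            return handler(ctx, *args, **kwargs)
        return wrapper
    return decorator


@enforce("model.deploy")
def deploy_model(ctx, model_id):
    return f"deployed {model_id}"
```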

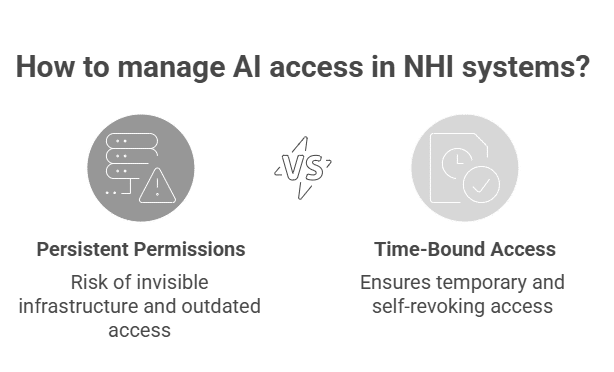

4. Auto-Expiring Access as the Default State

Standing privileges are handy, but they’re also dangerous. In AI-heavy systems, persistent permissions become invisible infrastructure. Service accounts retain write access long after the original need has faded. OAuth tokens sit embedded in integrations that no one revisits.

Auto-expiring access should be the default for NHIs. If an AI agent needs temporary cross-system access to perform a task, that access should be time-bound and self-revoking.
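Here is a minimal sketch of time-bound grants that revoke themselves. It assumes a hypothetical in-memory `GrantStore`. A real system would persist grants and would likely also revoke them in the underlying provider. The `now` parameter exists only to make the behavior easy to test.

```python
import time
from dataclasses import dataclass


@dataclass
class TimeBoundGrant:
    identity: str
    permission: str
    expires_at: float  # epoch seconds


class GrantStore:
    """Hypothetical store where every grant carries its own expiry."""

    def __init__(self):
        self._grants = []

    def grant(self, identity, permission, ttl_seconds, now=None):
        now = time.time() if now is None else now
        self._grants.append(TimeBoundGrant(identity, permission, now + ttl_seconds))

    def is_allowed(self, identity, permission, now=None):
        now = time.time() if now is None else now
        # Self-revocation: drop anything past its expiry before checking.
        self._grants = [g for g in self._grants if g.expires_at > now]
        return any(g.identity == identity and g.permission == permission for g in self._grants)
```

Note that `grant` has no way to create a permanent grant, because a TTL is mandatory. Standing access therefore has to be an explicit exception handled elsewhere rather than the default.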

5. Contextual, Task-Scoped Permissions

An AI enrichment agent may need to read from one dataset, update specific records, and call a defined API endpoint. It rarely needs unrestricted write access across environments.

Contextual, task-scoped permissions limit identities to:

- Specific datasets.

- Specific API methods.

- Specific environments.

- Specific time windows.

Instead of granting “database write,” you grant “write access to this table during this job execution.” Granularity is the difference between “least privilege” on paper and least privilege in practice.
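Here is a minimal sketch of a task-scoped permission. Each grant names one job, one table, a set of methods, one environment, and a time window. `TaskScope` and its field names are illustrative and do not come from any product's schema. SQL verbs are used only as sample method names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskScope:
    """Illustrative permission bound to one job execution."""
    job_id: str
    table: str
    methods: frozenset   # e.g. frozenset({"SELECT", "UPDATE"})
    environment: str
    not_before: float    # epoch seconds
    not_after: float     # epoch seconds (exclusive)

    def permits(self, job_id, table, method, environment, now):
        # Every dimension must match; there is no "any table" or "any env" fallback.
        return (
            job_id == self.job_id
            and table == self.table
            and method in self.methods
            and environment == self.environment
            and self.not_before <= now < self.not_after
        )


scope = TaskScope("job-17", "customers_enriched", frozenset({"SELECT", "UPDATE"}),
                  "prod", 1000.0, 1600.0)
```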

6. Agent-Specific Guardrails

Not all machine identities are equal.

A reporting agent shouldn’t share the same privilege boundary as a deployment pipeline. An inference endpoint shouldn’t hold infrastructure modification rights. You get the point.

Agent-specific guardrails formalize those boundaries. They codify what each class of NHI is allowed to do and what it is explicitly forbidden from doing. These guardrails also support continuous adversarial exposure validation by ensuring that AI-driven workflows cannot exceed their intended blast radius.

In AI ecosystems where agents chain together, this becomes critical. Without guardrails, privilege inheritance spreads invisibly across workflows. Guardrails enforce architectural intent.
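One way to express agent-specific guardrails is as per-class allow and deny lists, with deny taking precedence. The sketch is hypothetical throughout:

- The class names and the `service:action` permission strings are invented.
- The trailing `:*` wildcard convention is an assumption.
- For agent chains, it makes one possible design choice: an action is allowed only if every class in the chain allows it, so privileges do not silently flow from one agent to another.

```python
# Hypothetical guardrail profiles per class of NHI: explicit allows and explicit denies.
GUARDRAILS = {
    "reporting":  {"allow": {"warehouse:read", "dashboard:write"}, "deny": {"infra:*", "iam:*"}},
    "deployment": {"allow": {"infra:apply", "registry:read"},      "deny": {"warehouse:*", "iam:*"}},
    "inference":  {"allow": {"model:invoke", "featurestore:read"}, "deny": {"infra:*", "iam:*"}},
}


def _matches(action, patterns):
    # Exact match, or a trailing "service:*" wildcard covering every action of that service.
    return any(p == action or (p.endswith(":*") and action.startswith(p[:-1])) for p in patterns)


def class_permits(agent_class, action):
    g = GUARDRAILS[agent_class]
    # Deny wins over allow.
    return not _matches(action, g["deny"]) and _matches(action, g["allow"])


def chain_permits(chain, action):
    """Allow an action in an agent chain only if every class in the chain permits it."""
    return all(class_permits(c, action) for c in chain)
```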

7. Human Authorization for Disruptive Actions

Even in the AI-dominated world we find ourselves moving towards, certain operations should never be fully automated without oversight. Think:

- Deleting datasets.

- Modifying production infrastructure.

- Granting additional privileges.

- Rotating encryption keys.

- Accessing high-sensitivity data stores.

Human-in-the-loop controls for disruptive actions create deliberate checkpoints where machine speed must slow down.
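Here is a minimal sketch of a human-in-the-loop gate. It assumes a hypothetical `DISRUPTIVE` action set that mirrors the list above. It also assumes an `approvals` mapping that some out-of-band review step, such as a ticket or a chat prompt, fills in. Non-disruptive actions run at machine speed, while disruptive ones stop until a named approver is recorded.

```python
# Hypothetical action names mirroring the disruptive operations listed above.
DISRUPTIVE = {"dataset.delete", "infra.modify_prod", "iam.grant", "kms.rotate_key", "pii_store.read"}


class ApprovalRequired(Exception):
    pass


def execute(action, run, approvals, request_id):
    """Run non-disruptive actions immediately; hold disruptive ones until a human approves.

    approvals maps request_id -> approver name and is assumed to be populated
    by a separate human review step.
    """
    if action in DISRUPTIVE and request_id not in approvals:
        raise ApprovalRequired(f"{action} (request {request_id}) needs human sign-off")
    return run()
```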

Gaining Back NHI Control at Machine Speed

Static roles, quarterly reviews, and spreadsheet-based IAM were never designed for machine-dominated environments. When AI agents can create, modify, and move data in seconds, access governance has to operate at the same cadence.

As long as service accounts, CI tokens, and AI agents retain persistent write access by default, governance will always lag reality. You can’t manage NHIs at machine speed if access decisions were made months ago and never revisited. If you want to understand where your exposure really sits, start with standing access.
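As a starting point for that exercise, here is a minimal sketch that scans a grant inventory for standing write access. It flags grants that have no expiry and a write-like permission, and labels each by whether it was used recently. Several pieces are assumptions:

- The `NHIGrant` record.
- The 30-day staleness threshold.
- The keyword-based `WRITE_HINTS` heuristic. Real permission models usually need a proper mapping from actions to read or write.

```python
from dataclasses import dataclass
from typing import Optional

# Naive heuristic (an assumption): permission names containing these words count as write-like.
WRITE_HINTS = ("write", "put", "delete", "update", "admin")


@dataclass
class NHIGrant:
    identity: str
    permission: str
    expires_at: Optional[float]  # epoch seconds; None means the grant never expires
    last_used: Optional[float]   # epoch seconds; None means never observed in use


def standing_write_access(grants, now, stale_after_days=30):
    """List non-expiring, write-like grants, labeled "unused" or "active"."""
    stale_cutoff = now - stale_after_days * 86400
    findings = []
    for g in grants:
        if g.expires_at is not None:
            continue  # time-bound grants are not standing access
        if not any(h in g.permission.lower() for h in WRITE_HINTS):
            continue
        unused = g.last_used is None or g.last_used < stale_cutoff
        findings.append((g.identity, g.permission, "unused" if unused else "active"))
    return findings
```

Grants labeled "unused" are the obvious first candidates for removal or conversion to time-bound access.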

Autonomous agents are moving into production environments faster than security teams can assess the risk. Learn more about how to manage your agents’ access using our Agent Privilege Guard, and book a demo here.